Compare commits

438 Commits

1.0.84...feature/ag

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

7ce89a3e89 | ||

|

|

fe43e87e1a | ||

|

|

d55d1c0fd9 | ||

|

|

f0e5a9e172 | ||

|

|

866c08f590 | ||

|

|

bec634dd37 | ||

|

|

cbf65fef48 | ||

|

|

6df57c4a23 | ||

|

|

da6d20d118 | ||

|

|

64c629775a | ||

|

|

46f2d62fed | ||

|

|

b91943066e | ||

|

|

58d73637bb | ||

|

|

0ffaac65d4 | ||

|

|

4ce9c4c191 | ||

|

|

4681b1986f | ||

|

|

89d42fd469 | ||

|

|

8a903f695e | ||

|

|

3a20af25f1 | ||

|

|

24046b6660 | ||

|

|

453112d09f | ||

|

|

42a0034728 | ||

|

|

d8d660be8d | ||

|

|

c5a2d583c7 | ||

|

|

cd656264cf | ||

|

|

272c8204c7 | ||

|

|

23c7321b63 | ||

|

|

e24a38f89f | ||

|

|

5847cfc94c | ||

|

|

05d5958307 | ||

|

|

ffa81cb7df | ||

|

|

bd231e639d | ||

|

|

73a0a48eab | ||

|

|

fd18a247d7 | ||

|

|

501c35fa44 | ||

|

|

f114181fe2 | ||

|

|

b347faff83 | ||

|

|

16e14ddd1c | ||

|

|

2117d42f60 | ||

|

|

79b8dbaefd | ||

|

|

04f5c28052 | ||

|

|

a4da036389 | ||

|

|

a73cf015d6 | ||

|

|

35bf3de62a | ||

|

|

6fce6ec777 | ||

|

|

61fe8b2b56 | ||

|

|

dd1022a990 | ||

|

|

36f7d14c68 | ||

|

|

e9a4599714 | ||

|

|

e06507a06b | ||

|

|

195b54e07e | ||

|

|

b05242873d | ||

|

|

eccb052de8 | ||

|

|

077dd36b3c | ||

|

|

6405b36854 | ||

|

|

99f413282c | ||

|

|

b3e5af30ab | ||

|

|

b8b2bb5f7a | ||

|

|

806fa726c1 | ||

|

|

1f0ea0ac4d | ||

|

|

0b271383fd | ||

|

|

11e77a9151 | ||

|

|

143f7f47a2 | ||

|

|

3ea9d5021e | ||

|

|

031076b498 | ||

|

|

305bab9819 | ||

|

|

f5ca5bdca3 | ||

|

|

fe82550637 | ||

|

|

53e4b413ec | ||

|

|

07878ddf36 | ||

|

|

cc232c1383 | ||

|

|

52c60d9d18 | ||

|

|

d400998fa2 | ||

|

|

827bedb696 | ||

|

|

54c48cb106 | ||

|

|

944178abce | ||

|

|

9defbb7991 | ||

|

|

c857453654 | ||

|

|

d3495c1def | ||

|

|

a9f6d4671c | ||

|

|

5ef1ea906d | ||

|

|

d6f5b5c313 | ||

|

|

f0c6ce2b8d | ||

|

|

f60491885f | ||

|

|

7a2a307a0a | ||

|

|

f6d52114b8 | ||

|

|

cac94e0faf | ||

|

|

e4c14863fb | ||

|

|

6147c7d697 | ||

|

|

751b11881f | ||

|

|

5da9bc8626 | ||

|

|

90613c0ec1 | ||

|

|

cb0f71ba63 | ||

|

|

d118958ac1 | ||

|

|

8df36a9b98 | ||

|

|

5b71c8eacf | ||

|

|

0aeabcc963 | ||

|

|

7788f954d6 | ||

|

|

fe87c4ccc8 | ||

|

|

4f02b299c3 | ||

|

|

274da54d50 | ||

|

|

ca400cfbba | ||

|

|

e437ba8128 | ||

|

|

5e9f515c66 | ||

|

|

5bfdfa2aa1 | ||

|

|

14666357bd | ||

|

|

b53a391492 | ||

|

|

dab2111f10 | ||

|

|

c15048954c | ||

|

|

ba3d8925f2 | ||

|

|

300f1691e7 | ||

|

|

a1b5756107 | ||

|

|

b1d3ee54a5 | ||

|

|

4df4ea1930 | ||

|

|

2f83a5c98c | ||

|

|

cae8b6e1e4 | ||

|

|

da21bdcf85 | ||

|

|

ac92766c6c | ||

|

|

329381b1ee | ||

|

|

fc1f842f6b | ||

|

|

70519e3309 | ||

|

|

656802b343 | ||

|

|

44c6236243 | ||

|

|

51501cf5e1 | ||

|

|

9dabf678e5 | ||

|

|

e2231a0dbd | ||

|

|

f4ce7e2ec6 | ||

|

|

9fd908bb71 | ||

|

|

c3eedb4785 | ||

|

|

f6f4dfb9f7 | ||

|

|

739f5213a6 | ||

|

|

79ebce842d | ||

|

|

bafd84b0c9 | ||

|

|

cad2654fa2 | ||

|

|

dcde3d7738 | ||

|

|

5c76ab69a5 | ||

|

|

7bd3bed5e4 | ||

|

|

356bd0b81f | ||

|

|

898c34a157 | ||

|

|

d037f45f94 | ||

|

|

1e487fb6ea | ||

|

|

2ad0b03863 | ||

|

|

8e2be82a2b | ||

|

|

1d4d9fa48c | ||

|

|

704ed81ad9 | ||

|

|

8a7554efb8 | ||

|

|

dcc6cdf20f | ||

|

|

390b1e4ba3 | ||

|

|

d48fd02a99 | ||

|

|

05bb69db58 | ||

|

|

fb8a88d8f9 | ||

|

|

4f418794fd | ||

|

|

52d68ad80a | ||

|

|

7da308b563 | ||

|

|

e24ff2c652 | ||

|

|

94aa7669d9 | ||

|

|

44e590caa3 | ||

|

|

37e429cfca | ||

|

|

599874eba2 | ||

|

|

b4c1899561 | ||

|

|

faf847a742 | ||

|

|

77c9a42b2d | ||

|

|

9ea10b8455 | ||

|

|

e8a7675845 | ||

|

|

e29ae93c53 | ||

|

|

3ee93f9534 | ||

|

|

bf8878d40c | ||

|

|

fc077819a9 | ||

|

|

281eb6560d | ||

|

|

74fbafeb3f | ||

|

|

56c3f90559 | ||

|

|

27a2d68e9b | ||

|

|

085fe3a9b0 | ||

|

|

bb1adacc7f | ||

|

|

fdc2e39646 | ||

|

|

773cb4af78 | ||

|

|

9036d9e7c6 | ||

|

|

fddd753a48 | ||

|

|

b42cee36ae | ||

|

|

4694d31e2c | ||

|

|

4abecd1d76 | ||

|

|

5ae999078c | ||

|

|

538db8f484 | ||

|

|

ca79831c1e | ||

|

|

255173c6ac | ||

|

|

8d197f963d | ||

|

|

3c5107f113 | ||

|

|

80ee58ba1e | ||

|

|

d219168451 | ||

|

|

977b85f8ea | ||

|

|

9b31fa5390 | ||

|

|

0e0c83a065 | ||

|

|

3ddfd4fb4e | ||

|

|

a093be5581 | ||

|

|

cfd784b919 | ||

|

|

a3ebe6c992 | ||

|

|

4eefb81a0b | ||

|

|

f914707536 | ||

|

|

931d5dd520 | ||

|

|

b216d1b647 | ||

|

|

4de3d98b79 | ||

|

|

6fa5c323b8 | ||

|

|

c754bd11da | ||

|

|

5f5fa8ecae | ||

|

|

6ae6f4f25b | ||

|

|

6b69284497 | ||

|

|

52d7dbd7ce | ||

|

|

2070753c5e | ||

|

|

c1f7fb78d9 | ||

|

|

b71cf87ec2 | ||

|

|

0965c3046e | ||

|

|

8e50bc8d75 | ||

|

|

be5b77c4ac | ||

|

|

6078db6157 | ||

|

|

97f457575d | ||

|

|

4df59d8862 | ||

|

|

621004e6d8 | ||

|

|

74b2ab13e7 | ||

|

|

8fef5148d9 | ||

|

|

b91f6e6b25 | ||

|

|

0ec20d14b0 | ||

|

|

c705432510 | ||

|

|

de379d73b2 | ||

|

|

ad1bf658a9 | ||

|

|

ab9b95dbed | ||

|

|

7f37233c72 | ||

|

|

77aa3b85d1 | ||

|

|

863c978d12 | ||

|

|

cc950b893e | ||

|

|

f085b633af | ||

|

|

3d0b3eeb7f | ||

|

|

4a69df4476 | ||

|

|

639249d346 | ||

|

|

8d9ee006ee | ||

|

|

14642e9856 | ||

|

|

3efd7712be | ||

|

|

d2417b0082 | ||

|

|

bae903f8ca | ||

|

|

1e17e258b6 | ||

|

|

cc13a580fa | ||

|

|

54d8cf4cd9 | ||

|

|

03da285d1c | ||

|

|

339f788832 | ||

|

|

ccf738127d | ||

|

|

b90c7a027a | ||

|

|

2b0506a393 | ||

|

|

81278be964 | ||

|

|

cef4a00d17 | ||

|

|

9083c3ace3 | ||

|

|

79923451d0 | ||

|

|

02168e6fd9 | ||

|

|

a3debc0983 | ||

|

|

9a0908f504 | ||

|

|

4d03673d8c | ||

|

|

c4ebd5cd31 | ||

|

|

43361ff271 | ||

|

|

f1d9fc154a | ||

|

|

9811d9f7fd | ||

|

|

63e2fc2c49 | ||

|

|

63b3bcb71e | ||

|

|

9d9bc53e0b | ||

|

|

8fd203931d | ||

|

|

9c181486a5 | ||

|

|

2b036c8a62 | ||

|

|

ec0ebc9654 | ||

|

|

c2e4e3fa7f | ||

|

|

d6a57560fe | ||

|

|

a4c47216f5 | ||

|

|

202fbef8b7 | ||

|

|

48d0a494ee | ||

|

|

b2e1330ec0 | ||

|

|

a2d95a052a | ||

|

|

d86217dd0b | ||

|

|

70b4a7961a | ||

|

|

0248f21654 | ||

|

|

252ea50717 | ||

|

|

1155a9be9f | ||

|

|

bc87d0c7ec | ||

|

|

a895e3a0ea | ||

|

|

68acedfd0a | ||

|

|

be08f11027 | ||

|

|

b9b18271a1 | ||

|

|

12aa38cab0 | ||

|

|

b05f1d9b12 | ||

|

|

bc2a931586 | ||

|

|

1bda78e35b | ||

|

|

e7e71f6421 | ||

|

|

57f874921c | ||

|

|

2d7902167b | ||

|

|

407b7eb280 | ||

|

|

e62a7511db | ||

|

|

c904a69b09 | ||

|

|

11bf20c405 | ||

|

|

c3273ea8ca | ||

|

|

f6a29842b5 | ||

|

|

3781ea7a51 | ||

|

|

6f1da25f7e | ||

|

|

e74ef7115c | ||

|

|

c9db51a71e | ||

|

|

681a692ca9 | ||

|

|

9a6065fdb3 | ||

|

|

e245d9633f | ||

|

|

590364dd53 | ||

|

|

bb4e7477bd | ||

|

|

c33c1c8315 | ||

|

|

05eecdccaa | ||

|

|

26bb2f9bab | ||

|

|

bbafc95577 | ||

|

|

bee09aa626 | ||

|

|

8aa6e3b066 | ||

|

|

d40912c638 | ||

|

|

ba0444194f | ||

|

|

ac3f505aa6 | ||

|

|

7e5ca53fda | ||

|

|

2b0238b9e8 | ||

|

|

469a0fe491 | ||

|

|

983a3617f0 | ||

|

|

b638b981c9 | ||

|

|

fe5078891f | ||

|

|

44b4de9ed9 | ||

|

|

854c0b4acf | ||

|

|

18c5d06a6c | ||

|

|

22b403d0b0 | ||

|

|

ee0493eb57 | ||

|

|

f966b4b74e | ||

|

|

1dadbacd2c | ||

|

|

714c16c216 | ||

|

|

cf2c510b23 | ||

|

|

a0bcc47b2e | ||

|

|

57ecbc2572 | ||

|

|

99beb3e6d0 | ||

|

|

7756eed9a0 | ||

|

|

b795117f0a | ||

|

|

0d091d1826 | ||

|

|

9fd77a6743 | ||

|

|

57a962148b | ||

|

|

3c64f2099f | ||

|

|

a9c7f4e5e0 | ||

|

|

e7f58d4e0d | ||

|

|

138497b30f | ||

|

|

3a792090e2 | ||

|

|

7ef859bba5 | ||

|

|

3c30593e1e | ||

|

|

98b794ca2b | ||

|

|

bc90a15a68 | ||

|

|

bb4689e94b | ||

|

|

6739c93edc | ||

|

|

c8c30d703b | ||

|

|

419b0369c9 | ||

|

|

d9a94b95e1 | ||

|

|

db1db948c8 | ||

|

|

41ad780224 | ||

|

|

cb58c6a9b0 | ||

|

|

d1115e0b35 | ||

|

|

740bd3750b | ||

|

|

c9aa6c9e08 | ||

|

|

71bba6ee0d | ||

|

|

27b2201ff9 | ||

|

|

23d23c4ad7 | ||

|

|

e409ff1cf9 | ||

|

|

9a12cebb68 | ||

|

|

24f5bc4fec | ||

|

|

d7c313417b | ||

|

|

b67b4c7eb5 | ||

|

|

ab70201844 | ||

|

|

ac8a40a017 | ||

|

|

1ac65f821b | ||

|

|

d603c4b94b | ||

|

|

a418cbc1dc | ||

|

|

785dd12730 | ||

|

|

dda807d818 | ||

|

|

a06a4025fa | ||

| 761fbc3398 | |||

| a96dc11679 | |||

| b2b3febdaa | |||

|

|

f27bea11d5 | ||

|

|

9503451d5a | ||

|

|

04bae4ca6a | ||

|

|

3e33b8df62 | ||

|

|

a494053263 | ||

|

|

260c57ca84 | ||

|

|

db008de0ca | ||

|

|

1b38466f44 | ||

|

|

26ec00dab8 | ||

|

|

5e6971cc4a | ||

|

|

8b3417ecda | ||

|

|

35f5f34196 | ||

|

|

d8c3edd55f | ||

|

|

7ffbc5d3f2 | ||

|

|

c4b7830614 | ||

|

|

69f6fd81cf | ||

|

|

b6a293add7 | ||

|

|

25694a8bc9 | ||

|

|

12bb10392e | ||

|

|

e9c33ab0b2 | ||

|

|

903a8176cd | ||

|

|

4a91918e84 | ||

|

|

ff3344616c | ||

|

|

726fea5b74 | ||

|

|

a09f1362e9 | ||

|

|

4ef0821932 | ||

|

|

2d3cf228cb | ||

|

|

5b3713c69e | ||

|

|

e9486cbb8e | ||

|

|

057f0babeb | ||

|

|

da146640ca | ||

|

|

82be761b86 | ||

|

|

9c3fc49df1 | ||

|

|

5f19eb17ac | ||

|

|

ecb04d6d82 | ||

|

|

3fc7e9423c | ||

|

|

405a08b330 | ||

|

|

921f745435 | ||

|

|

bedfec6bf9 | ||

|

|

afa09e87a5 | ||

|

|

baf2320ea6 | ||

|

|

948a7444fb | ||

|

|

ec0eb8b469 | ||

|

|

8f33de7e59 | ||

|

|

8c59e6511b | ||

|

|

b93fc7623a | ||

|

|

bd1a57c7e0 | ||

|

|

7fabead249 | ||

|

|

268a973d5e | ||

|

|

d949a3cb69 | ||

|

|

e2443ed68a | ||

|

|

37193b1f5b | ||

|

|

e33071ae38 | ||

|

|

fffc8dc526 | ||

|

|

def950cc9c | ||

|

|

f4db7ca326 | ||

|

|

18760250ea | ||

|

|

233597efd1 | ||

|

|

cec9f29eb7 | ||

|

|

20cb92a418 | ||

|

|

b0dc38954b | ||

|

|

1479d0a494 | ||

|

|

b328daee43 |

10

.github/CODEOWNERS

vendored

Normal file

@@ -0,0 +1,10 @@

# See https://docs.github.com/repositories/managing-your-repositorys-settings-and-features/customizing-your-repository/about-code-owners

# Default owners for everything in the repo
* @amithkoujalgi

# Example for scoping ownership (uncomment and adjust as teams evolve)
# /docs/ @amithkoujalgi
# /src/ @amithkoujalgi

59

.github/ISSUE_TEMPLATE/bug_report.yml

vendored

Normal file

@@ -0,0 +1,59 @@

name: Bug report
description: File a bug report
labels: [bug]
assignees: []
body:
  - type: markdown
    attributes:
      value: |
        Thanks for taking the time to fill out this bug report!
  - type: input
    id: version
    attributes:
      label: ollama4j version
      description: e.g., 1.1.0
      placeholder: 1.1.0
    validations:
      required: true
  - type: input
    id: java
    attributes:
      label: Java version
      description: Output of `java -version`
      placeholder: 11/17/21
    validations:
      required: true
  - type: input
    id: environment
    attributes:
      label: Environment
      description: OS, build tool, Docker/Testcontainers, etc.
      placeholder: macOS 13, Maven 3.9.x, Docker 24.x
  - type: textarea
    id: what-happened
    attributes:
      label: What happened?
      description: Also tell us what you expected to happen
    validations:
      required: true
  - type: textarea
    id: steps
    attributes:
      label: Steps to reproduce
      description: Be as specific as possible
      placeholder: |
        1. Setup ...
        2. Run ...
        3. Observe ...
    validations:
      required: true
  - type: textarea
    id: logs
    attributes:
      label: Relevant logs/stack traces
      render: shell
  - type: textarea
    id: additional
    attributes:
      label: Additional context

6

.github/ISSUE_TEMPLATE/config.yml

vendored

Normal file

@@ -0,0 +1,6 @@

blank_issues_enabled: false
contact_links:
  - name: Questions / Discussions
    url: https://github.com/ollama4j/ollama4j/discussions
    about: Ask questions and discuss ideas here

31

.github/ISSUE_TEMPLATE/feature_request.yml

vendored

Normal file

@@ -0,0 +1,31 @@

name: Feature request
description: Suggest an idea or enhancement
labels: [enhancement]
assignees: []
body:
  - type: markdown
    attributes:
      value: |
        Thanks for suggesting an improvement!
  - type: textarea
    id: problem
    attributes:
      label: Is your feature request related to a problem?
      description: A clear and concise description of the problem
      placeholder: I'm frustrated when...
  - type: textarea
    id: solution
    attributes:
      label: Describe the solution you'd like
      placeholder: I'd like...
    validations:
      required: true
  - type: textarea
    id: alternatives
    attributes:
      label: Describe alternatives you've considered
  - type: textarea
    id: context
    attributes:
      label: Additional context

34

.github/PULL_REQUEST_TEMPLATE.md

vendored

Normal file

@@ -0,0 +1,34 @@

## Description

Describe what this PR does and why.

## Type of change

- [ ] feat: New feature
- [ ] fix: Bug fix
- [ ] docs: Documentation update
- [ ] refactor: Refactoring
- [ ] test: Tests only
- [ ] build/ci: Build or CI changes

## How has this been tested?

Explain the testing done. Include commands, screenshots, logs.

## Checklist

- [ ] I ran `pre-commit run -a` locally
- [ ] `make build` succeeds locally
- [ ] Unit/integration tests added or updated as needed
- [ ] Docs updated (README/docs site) if user-facing changes
- [ ] PR title follows Conventional Commits

## Breaking changes

List any breaking changes and migration notes.

## Related issues

Fixes #

34

.github/dependabot.yml

vendored

Normal file

@@ -0,0 +1,34 @@

# To get started with Dependabot version updates, you'll need to specify which
## package ecosystems to update and where the package manifests are located.
## Please see the documentation for all configuration options:
## https://docs.github.com/code-security/dependabot/dependabot-version-updates/configuration-options-for-the-dependabot.yml-file
#
#version: 2
#updates:
# - package-ecosystem: "" # See documentation for possible values
# directory: "/" # Location of package manifests
# schedule:
# interval: "weekly"


version: 2
updates:
  - package-ecosystem: "maven"
    directory: "/"
    schedule:
      interval: "weekly"
    open-pull-requests-limit: 5
    labels: ["dependencies"]
  - package-ecosystem: "github-actions"
    directory: "/"
    schedule:
      interval: "weekly"
    open-pull-requests-limit: 5
    labels: ["dependencies"]
  - package-ecosystem: "npm"
    directory: "/docs"
    schedule:
      interval: "weekly"
    open-pull-requests-limit: 5
    labels: ["dependencies"]
#

34

.github/workflows/build-on-pr-create.yml

vendored

@@ -1,34 +0,0 @@

# This workflow will build a package using Maven and then publish it to GitHub packages when a release is created
# For more information see: https://github.com/actions/setup-java/blob/main/docs/advanced-usage.md#apache-maven-with-a-settings-path

name: Build on PR Create

on:
  pull_request:
    types: [ opened, reopened ]
    branches: [ "main" ]


jobs:
  build:

    runs-on: ubuntu-latest
    permissions:
      contents: read
      packages: write

    steps:
      - uses: actions/checkout@v3
      - name: Set up JDK 11
        uses: actions/setup-java@v3
        with:
          java-version: '11'
          distribution: 'adopt-hotspot'
          server-id: github # Value of the distributionManagement/repository/id field of the pom.xml
          settings-path: ${{ github.workspace }} # location for the settings.xml file

      - name: Build with Maven
        run: mvn --file pom.xml -U clean package

      - name: Run Tests
        run: mvn --file pom.xml -U clean test -Punit-tests

59

.github/workflows/build-on-pull-request.yml

vendored

Normal file

@@ -0,0 +1,59 @@

name: Build and Test on Pull Request

on:
  pull_request:
    types: [opened, reopened, synchronize]
    branches:
      - main
    paths:
      - 'src/**'
      - 'pom.xml'

concurrency:
  group: ${{ github.workflow }}-${{ github.event.pull_request.number || github.ref }}
  cancel-in-progress: true

jobs:
  build:
    name: Build Java Project
    runs-on: ubuntu-latest
    permissions:
      contents: read

    environment:
      name: github-pages
      url: ${{ steps.deployment.outputs.page_url }}

    steps:
      - uses: actions/checkout@v5
      - name: Set up JDK 21
        uses: actions/setup-java@v5
        with:
          java-version: '21'
          distribution: 'oracle'
          server-id: github
          settings-path: ${{ github.workspace }}

      - name: Build with Maven
        run: mvn --file pom.xml -U clean package

  run-tests:
    name: Run Unit and Integration Tests
    needs: build
    uses: ./.github/workflows/run-tests.yml
    with:
      branch: ${{ github.head_ref || github.ref_name }}

  build-docs:
    name: Build Documentation
    needs: [build, run-tests]
    runs-on: ubuntu-latest

    steps:
      - uses: actions/checkout@v5
      - name: Use Node.js
        uses: actions/setup-node@v5
        with:
          node-version: '20.x'
      - run: cd docs && npm ci
      - run: cd docs && npm run build
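
A note on the `build` job in `build-on-pull-request.yml`: it declares `environment: github-pages` with `url: ${{ steps.deployment.outputs.page_url }}`, yet none of its listed steps carries `id: deployment`, so that expression would presumably resolve to an empty string, and the job would be subject to whatever protection rules the `github-pages` environment has. A minimal sketch of the job without the environment block, assuming the block was carried over from `publish-docs.yml` rather than intended here:

```yaml
# Sketch only: the build job from build-on-pull-request.yml with the
# environment block removed. All remaining keys are copied from the diff.
jobs:
  build:
    name: Build Java Project
    runs-on: ubuntu-latest
    permissions:
      contents: read

    steps:
      - uses: actions/checkout@v5
      - name: Set up JDK 21
        uses: actions/setup-java@v5
        with:
          java-version: '21'
          distribution: 'oracle'
          server-id: github
          settings-path: ${{ github.workspace }}

      - name: Build with Maven
        run: mvn --file pom.xml -U clean package
```

Whether the environment is actually needed for pull-request builds is a project decision; the sketch only shows what the job looks like without it.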

44

.github/workflows/codeql.yml

vendored

Normal file

@@ -0,0 +1,44 @@

name: CodeQL

on:
  push:
    branches: [ main ]
  pull_request:
    branches: [ main ]
  schedule:
    - cron: '0 3 * * 1'

jobs:
  analyze:
    name: Analyze
    runs-on: ubuntu-latest
    permissions:
      actions: read
      contents: read
      security-events: write
    strategy:
      fail-fast: false
      matrix:
        language: [ 'java', 'javascript' ]
    steps:
      - name: Checkout repository
        uses: actions/checkout@v5

      - name: Set up JDK
        if: matrix.language == 'java'
        uses: actions/setup-java@v5
        with:
          distribution: oracle
          java-version: '21'

      - name: Initialize CodeQL
        uses: github/codeql-action/init@v3
        with:
          languages: ${{ matrix.language }}

      - name: Autobuild
        uses: github/codeql-action/autobuild@v3

      - name: Perform CodeQL Analysis
        uses: github/codeql-action/analyze@v3

10

.github/workflows/gh-mvn-publish.yml

vendored

@@ -13,12 +13,12 @@ jobs:

       packages: write

     steps:
-      - uses: actions/checkout@v3
-      - name: Set up JDK 17
-        uses: actions/setup-java@v3
+      - uses: actions/checkout@v5
+      - name: Set up JDK 21
+        uses: actions/setup-java@v5
         with:
-          java-version: '17'
-          distribution: 'temurin'
+          java-version: '21'
+          distribution: 'oracle'
           server-id: github
           settings-path: ${{ github.workspace }}

24

.github/workflows/label-issue-stale.yml

vendored

Normal file

@@ -0,0 +1,24 @@

name: Mark stale issues

on:
  workflow_dispatch: # for manual run
  schedule:
    - cron: '0 0 * * *' # Runs every day at midnight

permissions:
  contents: write # only for delete-branch option
  issues: write

jobs:
  stale:
    runs-on: ubuntu-latest
    steps:
      - name: Mark stale issues
        uses: actions/stale@v10
        with:
          repo-token: ${{ github.token }}
          days-before-stale: 15
          stale-issue-message: 'This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs.'
          days-before-close: 7
          stale-issue-label: 'stale'
          exempt-issue-labels: 'pinned,security'

10

.github/workflows/maven-publish.yml

vendored

@@ -24,13 +24,13 @@ jobs:

       packages: write

     steps:
-      - uses: actions/checkout@v3
+      - uses: actions/checkout@v5

-      - name: Set up JDK 17
-        uses: actions/setup-java@v3
+      - name: Set up JDK 21
+        uses: actions/setup-java@v5
         with:
-          java-version: '17'
-          distribution: 'temurin'
+          java-version: '21'
+          distribution: 'oracle'
           server-id: github # Value of the distributionManagement/repository/id field of the pom.xml
           settings-path: ${{ github.workspace }} # location for the settings.xml file

30

.github/workflows/pre-commit.yml

vendored

Normal file

@@ -0,0 +1,30 @@

name: Pre-commit Check on PR

on:
  pull_request:
    types: [opened, reopened, synchronize]
    branches:
      - main

#on:
#  pull_request:
#    branches: [ main ]
#  push:
#    branches: [ main ]

jobs:
  run:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v5
      - uses: actions/setup-python@v6
        with:
          python-version: '3.x'
      - name: Install pre-commit
        run: |
          python -m pip install --upgrade pip
          pip install pre-commit
      # - name: Run pre-commit
      #   run: |
      #     pre-commit run --all-files --show-diff-on-failure
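
As committed, `pre-commit.yml` installs `pre-commit` but leaves the step that runs it commented out, so the workflow performs no checks. A minimal sketch of the step enabled, taken directly from the commented lines in the diff:

```yaml
# Sketch only: the commented-out step from pre-commit.yml, enabled.
# It would follow the "Install pre-commit" step in the same job.
- name: Run pre-commit
  run: |
    pre-commit run --all-files --show-diff-on-failure
```

One assumption to check before enabling it: the local `format-code` hook in `.pre-commit-config.yaml` shells out to `make apply-formatting`, so the runner would presumably also need whatever that Make target depends on.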

24

.github/workflows/publish-docs.yml

vendored

@@ -1,5 +1,5 @@

 # Simple workflow for deploying static content to GitHub Pages
-name: Deploy Docs to GH Pages
+name: Publish Docs to GH Pages

 on:
   release:
@@ -29,18 +29,18 @@ jobs:
       name: github-pages
       url: ${{ steps.deployment.outputs.page_url }}
     steps:
-      - uses: actions/checkout@v3
-      - name: Set up JDK 11
-        uses: actions/setup-java@v3
+      - uses: actions/checkout@v5
+      - name: Set up JDK 21
+        uses: actions/setup-java@v5
         with:
-          java-version: '11'
-          distribution: 'adopt-hotspot'
+          java-version: '21'
+          distribution: 'oracle'
           server-id: github # Value of the distributionManagement/repository/id field of the pom.xml
           settings-path: ${{ github.workspace }} # location for the settings.xml file

-      - uses: actions/checkout@v4
+      - uses: actions/checkout@v5
       - name: Use Node.js
-        uses: actions/setup-node@v3
+        uses: actions/setup-node@v5
         with:
           node-version: '20.x'
       - run: cd docs && npm ci
@@ -57,18 +57,18 @@ jobs:
         run: mvn --file pom.xml -U clean package && cp -r ./target/apidocs/. ./docs/build/apidocs

       - name: Doxygen Action
-        uses: mattnotmitt/doxygen-action@v1.1.0
+        uses: mattnotmitt/doxygen-action@v1.12.0
         with:
           doxyfile-path: "./Doxyfile"
           working-directory: "."

       - name: Setup Pages
-        uses: actions/configure-pages@v3
+        uses: actions/configure-pages@v5
       - name: Upload artifact
-        uses: actions/upload-pages-artifact@v2
+        uses: actions/upload-pages-artifact@v4
         with:
           # Upload entire repository
           path: './docs/build/.'
       - name: Deploy to GitHub Pages
         id: deployment
-        uses: actions/deploy-pages@v2
+        uses: actions/deploy-pages@v4

54

.github/workflows/run-tests.yml

vendored

Normal file

@@ -0,0 +1,54 @@

name: Run Tests

on:
  # push:
  #   branches:
  #     - main

  workflow_call:
    inputs:
      branch:
        description: 'Branch name to run the tests on'
        required: true
        default: 'main'
        type: string

  workflow_dispatch:
    inputs:
      branch:
        description: 'Branch name to run the tests on'
        required: true
        default: 'main'
        type: string

jobs:
  run-tests:
    name: Unit and Integration Tests
    runs-on: ubuntu-latest

    steps:
      - name: Checkout target branch
        uses: actions/checkout@v5
        with:
          ref: ${{ github.event.inputs.branch }}

      - name: Set up Ollama
        run: |
          curl -fsSL https://ollama.com/install.sh | sh

      - name: Set up JDK 21
        uses: actions/setup-java@v5
        with:
          java-version: '21'
          distribution: 'oracle'
          server-id: github
          settings-path: ${{ github.workspace }}

      - name: Run unit tests
        run: make unit-tests

      - name: Run integration tests
        run: make integration-tests-basic
        env:
          USE_EXTERNAL_OLLAMA_HOST: "true"
          OLLAMA_HOST: "http://localhost:11434"
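
One detail worth checking in `run-tests.yml`: the checkout step reads `github.event.inputs.branch`. According to GitHub's documentation, `github.event.inputs` is populated for `workflow_dispatch` runs, whereas a value passed by a caller through `workflow_call` (as `build-on-pull-request.yml` does with `branch`) is exposed through the `inputs` context. A minimal sketch, assuming the intent is to check out the supplied branch under both triggers:

```yaml
# Sketch only: the same checkout step, reading from the `inputs` context,
# which is available for both workflow_call and workflow_dispatch.
steps:
  - name: Checkout target branch
    uses: actions/checkout@v5
    with:
      ref: ${{ inputs.branch }}
```

With `github.event.inputs.branch`, a run started via `workflow_call` would likely see an empty `ref`, in which case `actions/checkout` falls back to its default ref for the triggering event.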

33

.github/workflows/stale.yml

vendored

Normal file

@@ -0,0 +1,33 @@

name: Mark stale issues and PRs

on:
  schedule:
    - cron: '0 2 * * *'

permissions:
  issues: write
  pull-requests: write

jobs:
  stale:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/stale@v10
        with:
          days-before-stale: 60
          days-before-close: 14
          stale-issue-label: 'stale'
          stale-pr-label: 'stale'
          exempt-issue-labels: 'pinned,security'
          exempt-pr-labels: 'pinned,security'
          stale-issue-message: >
            This issue has been automatically marked as stale because it has not had
            recent activity. It will be closed if no further activity occurs.
          close-issue-message: >
            Closing this stale issue. Feel free to reopen if this is still relevant.
          stale-pr-message: >
            This pull request has been automatically marked as stale due to inactivity.
            It will be closed if no further activity occurs.
          close-pr-message: >
            Closing this stale pull request. Please reopen when you're ready to continue.
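
Both `label-issue-stale.yml` (15 days to stale, 7 to close) and `stale.yml` (60 and 14) act on issues and share the `stale` label, so for issues the shorter thresholds would likely take effect first. If the intent is for `stale.yml` to cover pull requests only, one possible arrangement is sketched below. It uses the per-type overrides that `actions/stale` documents, where a negative value disables the behaviour for that type; the split itself is an assumption, not something the diff states.

```yaml
# Sketch only, assuming issues are left to label-issue-stale.yml.
- uses: actions/stale@v10
  with:
    days-before-issue-stale: -1   # never mark issues stale from this workflow
    days-before-issue-close: -1   # never close issues from this workflow
    days-before-pr-stale: 60
    days-before-pr-close: 14
    stale-pr-label: 'stale'
    exempt-pr-labels: 'pinned,security'
```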

2

.gitignore

vendored

@@ -41,4 +41,4 @@ pom.xml.*

 release.properties
 !.idea/icon.svg

-src/main/java/io/github/ollama4j/localtests
+src/main/java/io/github/ollama4j/localtests

46

.pre-commit-config.yaml

Normal file

@@ -0,0 +1,46 @@

repos:

  # pre-commit hooks
  - repo: https://github.com/pre-commit/pre-commit-hooks
    rev: "v6.0.0"
    hooks:
      - id: no-commit-to-branch
        args: ['--branch', 'main']
      - id: check-merge-conflict
      - id: check-added-large-files
      - id: check-yaml
      - id: check-xml
      - id: check-json
      - id: pretty-format-json
        args: ['--no-sort-keys', '--autofix', '--indent=4']
      - id: end-of-file-fixer
        exclude: \.json$
        files: \.java$|\.xml$
      - id: trailing-whitespace
      - id: mixed-line-ending

  # for commit message formatting
  - repo: https://github.com/commitizen-tools/commitizen
    rev: v4.9.1
    hooks:
      - id: commitizen
        stages: [commit-msg]

  - repo: local
    hooks:
      - id: format-code
        name: Format Code
        entry: make apply-formatting
        language: system
        always_run: true

# # for java code quality
# - repo: https://github.com/gherynos/pre-commit-java
#   rev: v0.6.10
#   hooks:
#     - id: pmd
#       exclude: /test/
#     - id: cpd
#       exclude: /test/
#     - id: checkstyle
#       exclude: /test/

9

CITATION.cff

Normal file

@@ -0,0 +1,9 @@

cff-version: 1.2.0
message: "If you use this software, please cite it as below."
authors:
  - family-names: "Koujalgi"
    given-names: "Amith"
title: "Ollama4j: A Java Library (Wrapper/Binding) for Ollama Server"
version: "1.1.0"
date-released: 2023-12-19
url: "https://github.com/ollama4j/ollama4j"

|

||||

125

CONTRIBUTING.md

Normal file

@@ -0,0 +1,125 @@

## Contributing to Ollama4j

Thanks for your interest in contributing! This guide explains how to set up your environment, make changes, and submit pull requests.

### Code of Conduct

By participating, you agree to abide by our [Code of Conduct](CODE_OF_CONDUCT.md).

### Quick Start

Prerequisites:

- Java 11+
- Maven 3.8+
- Docker (required for integration tests)
- Make (for convenience targets)
- pre-commit (for Git hooks)

Setup:

```bash
# 1) Fork the repo and clone your fork
git clone https://github.com/<your-username>/ollama4j.git
cd ollama4j

# 2) Install and enable git hooks
pre-commit install --hook-type pre-commit --hook-type commit-msg

# 3) Prepare dev environment (installs husk deps/tools if needed)
make dev
```

Build and test:

```bash
# Build
make build

# Run unit tests
make unit-tests

# Run integration tests (requires Docker running)
make integration-tests
```

If you prefer raw Maven:

```bash
# Unit tests profile
mvn -P unit-tests clean test

# Integration tests profile (Docker required)
mvn -P integration-tests -DskipUnitTests=true clean verify
```

### Commit Style

We use Conventional Commits. Commit messages and PR titles should follow:

```
<type>(optional scope): <short summary>

[optional body]
[optional footer(s)]
```

Common types: `feat`, `fix`, `docs`, `refactor`, `test`, `build`, `chore`.

Commit message formatting is enforced via `commitizen` through `pre-commit` hooks.

### Pre-commit Hooks

Before pushing, run:

```bash
pre-commit run -a
```

Hooks will check for merge conflicts, large files, YAML/XML/JSON validity, line endings, and basic formatting. Fix reported issues before opening a PR.

### Coding Guidelines

- Target Java 11+; match existing style and formatting.
- Prefer clear, descriptive names over abbreviations.
- Add Javadoc for public APIs and non-obvious logic.
- Include meaningful tests for new features and bug fixes.
- Avoid introducing new dependencies without discussion.

### Tests

- Unit tests: place under `src/test/java/**/unittests/`.
- Integration tests: place under `src/test/java/**/integrationtests/` (uses Testcontainers; ensure Docker is running).

### Documentation

- Update `README.md`, Javadoc, and `docs/` when you change public APIs or user-facing behavior.
- Add example snippets where useful. Keep API references consistent with the website content when applicable.


### Pull Requests

|

||||

|

||||

Before opening a PR:

|

||||

|

||||

- Ensure `make build` and all tests pass locally.

|

||||

- Run `pre-commit run -a` and fix any issues.

|

||||

- Keep PRs focused and reasonably small. Link related issues (e.g., "Closes #123").

|

||||

- Describe the change, rationale, and any trade-offs in the PR description.

|

||||

|

||||

Review process:

|

||||

|

||||

- Maintainers will review for correctness, scope, tests, and docs.

|

||||

- You may be asked to iterate; please be responsive to comments.

|

||||

|

||||

### Security

|

||||

|

||||

If you discover a security issue, please do not open a public issue. Instead, email the maintainer at `koujalgi.amith@gmail.com` with details.

|

||||

|

||||

### License

|

||||

|

||||

By contributing, you agree that your contributions will be licensed under the project’s [MIT License](LICENSE).

|

||||

|

||||

### Questions and Discussion

|

||||

|

||||

Have questions or ideas? Open a GitHub Discussion or issue. We welcome feedback and proposals!

|

||||

|

||||

|

||||
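The commit header shape described in the Commit Style section of `CONTRIBUTING.md` above, `<type>(optional scope): <short summary>`, can be sanity-checked locally before the hooks run. The following is a minimal sketch, not the project's actual rule set: the function name `is_conventional_subject` and the regular expression are assumptions made for illustration. It accepts only the "common types" listed in the guide and ignores other parts of the Conventional Commits format, such as the `!` breaking-change marker. The `commitizen` hook remains the authority on what is accepted.

```shell
# Hypothetical helper (not part of the repository): returns 0 when the
# given subject line looks like "<type>(optional scope): <short summary>".
# The accepted types are only the common ones named in CONTRIBUTING.md;
# the scope character set is an assumption.
is_conventional_subject() {
  printf '%s\n' "$1" |
    grep -Eq '^(feat|fix|docs|refactor|test|build|chore)(\([a-z0-9._-]+\))?: .+'
}
```

For example, `is_conventional_subject "fix(api): handle empty response"` succeeds, while `is_conventional_subject "Updated stuff"` returns a non-zero status.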

2

LICENSE

@@ -1,6 +1,6 @@

|

||||

MIT License

|

||||

|

||||

Copyright (c) 2023 Amith Koujalgi

|

||||

Copyright (c) 2023 Amith Koujalgi and contributors

|

||||

|

||||

Permission is hereby granted, free of charge, to any person obtaining a copy

|

||||

of this software and associated documentation files (the "Software"), to deal

|

||||

|

||||

81

Makefile

@@ -1,28 +1,79 @@

|

||||

build:

|

||||

mvn -B clean install

|

||||

# Default target

|

||||

.PHONY: all

|

||||

all: dev build

|

||||

|

||||

unit-tests:

|

||||

mvn clean test -Punit-tests

|

||||

dev:

|

||||

@echo "Setting up dev environment..."

|

||||

@command -v pre-commit >/dev/null 2>&1 || { echo "Error: pre-commit is not installed. Please install it first."; exit 1; }

|

||||

@command -v docker >/dev/null 2>&1 || { echo "Error: docker is not installed. Please install it first."; exit 1; }

|

||||

@pre-commit install

|

||||

@pre-commit autoupdate

|

||||

@pre-commit install --install-hooks

|

||||

|

||||

integration-tests:

|

||||

mvn clean verify -Pintegration-tests

|

||||

check-formatting:

|

||||

@echo "\033[0;34mChecking code formatting...\033[0m"

|

||||

@mvn spotless:check

|

||||

|

||||

apply-formatting:

|

||||

@echo "\033[0;32mApplying code formatting...\033[0m"

|

||||

@mvn spotless:apply

|

||||

|

||||

build: apply-formatting

|

||||

@echo "\033[0;34mBuilding project (GPG skipped)...\033[0m"

|

||||

@mvn -B clean install -Dgpg.skip=true -Dmaven.javadoc.skip=true

|

||||

|

||||

full-build: apply-formatting

|

||||

@echo "\033[0;34mPerforming full build...\033[0m"

|

||||

@mvn -B clean install

|

||||

|

||||

unit-tests: apply-formatting

|

||||

@echo "\033[0;34mRunning unit tests...\033[0m"

|

||||

@mvn clean test -Punit-tests

|

||||

|

||||

integration-tests-all: apply-formatting

|

||||

@echo "\033[0;34mRunning integration tests (local - all)...\033[0m"

|

||||

@export USE_EXTERNAL_OLLAMA_HOST=false && mvn clean verify -Pintegration-tests

|

||||

|

||||

integration-tests-basic: apply-formatting

|

||||

@echo "\033[0;34mRunning integration tests (local - basic)...\033[0m"

|

||||

@export USE_EXTERNAL_OLLAMA_HOST=false && mvn clean verify -Pintegration-tests -Dit.test=WithAuth

|

||||

|

||||

integration-tests-remote: apply-formatting

|

||||

@echo "\033[0;34mRunning integration tests (remote - all)...\033[0m"

|

||||

@export USE_EXTERNAL_OLLAMA_HOST=true && export OLLAMA_HOST=http://192.168.29.229:11434 && mvn clean verify -Pintegration-tests -Dgpg.skip=true

|

||||

|

||||

doxygen:

|

||||

doxygen Doxyfile

|

||||

@echo "\033[0;34mGenerating documentation with Doxygen...\033[0m"

|

||||

@doxygen Doxyfile

|

||||

|

||||

javadoc:

|

||||

@echo "\033[0;34mGenerating Javadocs...\033[0m"

|

||||

@mvn clean javadoc:javadoc

|

||||

@if [ -f "target/reports/apidocs/index.html" ]; then \

|

||||

echo "\033[0;32mJavadocs generated in target/reports/apidocs/index.html\033[0m"; \

|

||||

else \

|

||||

echo "\033[0;31mFailed to generate Javadocs in target/reports/apidocs\033[0m"; \

|

||||

exit 1; \

|

||||

fi

|

||||

|

||||

list-releases:

|

||||

curl 'https://central.sonatype.com/api/internal/browse/component/versions?sortField=normalizedVersion&sortDirection=asc&page=0&size=12&filter=namespace%3Aio.github.amithkoujalgi%2Cname%3Aollama4j' \

|

||||

@echo "\033[0;34mListing latest releases...\033[0m"

|

||||

@curl 'https://central.sonatype.com/api/internal/browse/component/versions?sortField=normalizedVersion&sortDirection=desc&page=0&size=20&filter=namespace%3Aio.github.ollama4j%2Cname%3Aollama4j' \

|

||||

--compressed \

|

||||

--silent | jq '.components[].version'

|

||||

--silent | jq -r '.components[].version'

|

||||

|

||||

build-docs:

|

||||

npm i --prefix docs && npm run build --prefix docs

|

||||

docs-build:

|

||||

@echo "\033[0;34mBuilding documentation site...\033[0m"

|

||||

@cd ./docs && npm ci --no-audit --fund=false && npm run build

|

||||

|

||||

start-docs:

|

||||

npm i --prefix docs && npm run start --prefix docs

|

||||

docs-serve:

|

||||

@echo "\033[0;34mServing documentation site...\033[0m"

|

||||

@cd ./docs && npm install && npm run start

|

||||

|

||||

start-cpu:

|

||||

docker run -it -v ~/ollama:/root/.ollama -p 11434:11434 ollama/ollama

|

||||

@echo "\033[0;34mStarting Ollama (CPU mode)...\033[0m"

|

||||

@docker run -it -v ~/ollama:/root/.ollama -p 11434:11434 ollama/ollama

|

||||

|

||||

start-gpu:

|

||||

docker run -it --gpus=all -v ~/ollama:/root/.ollama -p 11434:11434 ollama/ollama

|

||||

@echo "\033[0;34mStarting Ollama (GPU mode)...\033[0m"

|

||||

@docker run -it --gpus=all -v ~/ollama:/root/.ollama -p 11434:11434 ollama/ollama

|

||||
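The new `dev` target in the Makefile above guards on `command -v pre-commit` and `command -v docker`, exiting at the first missing tool. The same pattern can be generalized to report every missing prerequisite in one pass. The sketch below is illustrative only: the function names `missing_tools` and `check_prerequisites` are hypothetical and do not exist in the repository, and the executable names in the usage note are assumed from the prerequisites list in `CONTRIBUTING.md`.

```shell
# Hypothetical pre-flight check, not part of the repository's Makefile.
# Prints the name of each tool that cannot be found on PATH.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Returns 0 when every named tool is available; otherwise lists the
# missing ones on stderr and returns 1.
check_prerequisites() {
  missing=$(missing_tools "$@")
  if [ -n "$missing" ]; then
    printf 'Error: missing required tools:\n%s\n' "$missing" >&2
    return 1
  fi
  printf 'All required tools are available.\n'
}
```

A call such as `check_prerequisites java mvn docker make pre-commit` would cover the tools named in the contributing guide. Adjust the names if your setup uses different executables.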

267

README.md

@@ -1,28 +1,32 @@

|

||||

<div align="center">

|

||||

<img src='https://raw.githubusercontent.com/ollama4j/ollama4j/refs/heads/main/ollama4j-new.jpeg' width='200' alt="ollama4j-icon">

|

||||

|

||||

### Ollama4j

|

||||

|

||||

<p align="center">

|

||||

<img src='https://raw.githubusercontent.com/ollama4j/ollama4j/65a9d526150da8fcd98e2af6a164f055572bf722/ollama4j.jpeg' width='100' alt="ollama4j-icon">

|

||||

</p>

|

||||

|

||||

|

||||

A Java library (wrapper/binding) for [Ollama](https://ollama.ai/) server.

|

||||

|

||||

Find more details on the [website](https://ollama4j.github.io/ollama4j/).

|

||||

</div>

|

||||

|

||||

<div align="center">

|

||||

A Java library (wrapper/binding) for Ollama server.

|

||||

|

||||

_Find more details on the **[website](https://ollama4j.github.io/ollama4j/)**._

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

[](https://github.com/ollama4j/ollama4j/actions/workflows/run-tests.yml)

|

||||

|

||||

[](https://codecov.io/gh/ollama4j/ollama4j)

|

||||

</div>

|

||||

|

||||

|

||||

[//]: # ()

|

||||

|

||||

[//]: # ()

|

||||

|

||||

|

||||

[//]: # ()

|

||||

|

||||

[//]: # ()

|

||||

@@ -31,28 +35,60 @@ Find more details on the [website](https://ollama4j.github.io/ollama4j/).

|

||||

|

||||

[//]: # ()

|

||||

|

||||

|

||||

[](https://codecov.io/gh/ollama4j/ollama4j)

|

||||

|

||||

|

||||

</div>

|

||||

|

||||

[//]: # ()

|

||||

|

||||

[//]: # ()

|

||||

|

||||

## Table of Contents

|

||||

|

||||

- [Capabilities](#capabilities)

|

||||

- [How does it work?](#how-does-it-work)

|

||||

- [Requirements](#requirements)

|

||||

- [Installation](#installation)

|

||||

- [API Spec](https://ollama4j.github.io/ollama4j/category/apis---model-management)

|

||||

- [Javadoc](https://ollama4j.github.io/ollama4j/apidocs/)

|

||||

- [Usage](#usage)

|

||||

- [For Maven](#for-maven)

|

||||

- [Using Maven Central](#using-maven-central)

|

||||

- [Using GitHub's Maven Package Repository](#using-githubs-maven-package-repository)

|

||||

- [For Gradle](#for-gradle)

|

||||

- [API Spec](#api-spec)

|

||||

- [Examples](#examples)

|

||||

- [Development](#development)

|

||||

- [Contributions](#get-involved)

|

||||

- [References](#references)

|

||||

- [Setup dev environment](#setup-dev-environment)

|

||||

- [Build](#build)

|

||||

- [Run unit tests](#run-unit-tests)

|

||||

- [Run integration tests](#run-integration-tests)

|

||||

- [Releases](#releases)

|

||||

- [Get Involved](#get-involved)

|

||||

- [Who's using Ollama4j?](#whos-using-ollama4j)

|

||||

- [Growth](#growth)

|

||||

- [References](#references)

|

||||

- [Credits](#credits)

|

||||

- [Appreciate the work?](#appreciate-the-work)

|

||||

|

||||

#### How does it work?

|

||||

## Capabilities

|

||||

|

||||

- **Text generation**: Single-turn `generate` with optional streaming and advanced options

|

||||

- **Chat**: Multi-turn chat with conversation history and roles

|

||||

- **Tool/function calling**: Built-in tool invocation via annotations and tool specs

|

||||

- **Reasoning/thinking modes**: Generate and chat with “thinking” outputs where supported

|

||||

- **Image inputs (multimodal)**: Generate with images as inputs where models support vision

|

||||

- **Embeddings**: Create vector embeddings for text

|

||||

- **Async generation**: Fire-and-forget style generation APIs

|

||||

- **Custom roles**: Define and use custom chat roles

|

||||

- **Model management**: List, pull, create, delete, and get model details

|

||||

- **Connectivity utilities**: Server `ping` and process status (`ps`)

|

||||

- **Authentication**: Basic auth and bearer token support

|

||||

- **Options builder**: Type-safe builder for model parameters and request options

|

||||

- **Timeouts**: Configure connect/read/write timeouts

|

||||

- **Logging**: Built-in logging hooks for requests and responses

|

||||

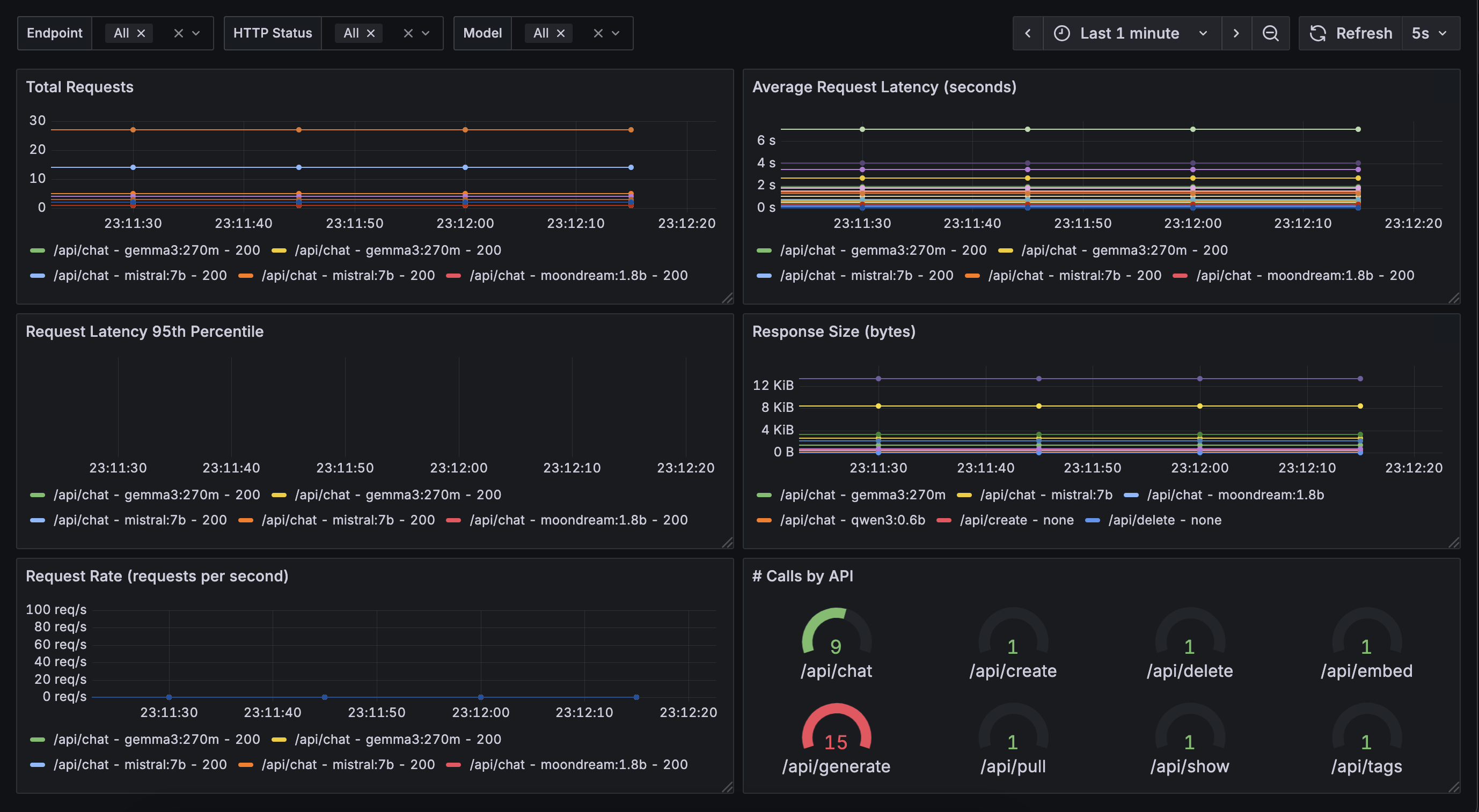

- **Metrics & Monitoring** 🆕: Built-in Prometheus metrics export for real-time monitoring of requests, model usage, and

|

||||

performance. *(Beta feature – feedback/contributions welcome!)* -

|

||||

Check out the [ollama4j-examples](https://github.com/ollama4j/ollama4j-examples) repository for details.

|

||||

|

||||

<div align="center">

|

||||

<img src='metrics.png' width='100%' alt="ollama4j-icon">

|

||||

</div>

|

||||

|

||||

## How does it work?

|

||||

|

||||

```mermaid

|

||||

flowchart LR

|

||||

@@ -66,71 +102,16 @@ Find more details on the [website](https://ollama4j.github.io/ollama4j/).

|

||||

end

|

||||

```

|

||||

|

||||

#### Requirements

|

||||

## Requirements

|

||||

|

||||

|

||||

<p align="center">

|

||||

<img src="https://img.shields.io/badge/Java-11%2B-green.svg?style=for-the-badge&labelColor=gray&label=Java&color=orange" alt="Java"/>

|

||||

<a href="https://ollama.com/" target="_blank">

|

||||

<img src="https://img.shields.io/badge/Ollama-0.11.10+-blue.svg?style=for-the-badge&labelColor=gray&label=Ollama&color=blue" alt="Ollama"/>

|

||||

</a>

|

||||

</p>

|

||||

|

||||

|

||||

<a href="https://ollama.com/" target="_blank">

|

||||

<img src="https://img.shields.io/badge/v0.3.0-green.svg?style=for-the-badge&labelColor=gray&label=Ollama&color=blue" alt=""/>

|

||||

</a>

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td>

|

||||

|

||||

<a href="https://ollama.ai/" target="_blank">Local Installation</a>

|

||||

|

||||

</td>

|

||||

|

||||

<td>

|

||||

|

||||

<a href="https://hub.docker.com/r/ollama/ollama" target="_blank">Docker Installation</a>

|

||||

|

||||

</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td>

|

||||

|

||||

<a href="https://ollama.com/download/Ollama-darwin.zip" target="_blank">Download for macOS</a>

|

||||

|

||||

<a href="https://ollama.com/download/OllamaSetup.exe" target="_blank">Download for Windows</a>

|

||||

|

||||

Install on Linux

|

||||

|

||||

```shell

|

||||

curl -fsSL https://ollama.com/install.sh | sh

|

||||

```

|

||||

|

||||

</td>

|

||||

<td>

|

||||

|

||||

|

||||

|

||||

CPU only

|

||||

|

||||

```shell

|

||||

docker run -d -p 11434:11434 \

|

||||

-v ollama:/root/.ollama \

|

||||

--name ollama \

|

||||

ollama/ollama

|

||||

```

|

||||

|

||||

NVIDIA GPU

|

||||

|

||||

```shell

|

||||

docker run -d -p 11434:11434 \

|

||||

--gpus=all \

|

||||

-v ollama:/root/.ollama \

|

||||

--name ollama \

|

||||

ollama/ollama

|

||||

```

|

||||

|

||||

</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

## Installation

|

||||

## Usage

|

||||

|

||||

> [!NOTE]

|

||||

> We are now publishing the artifacts to both Maven Central and GitHub package repositories.

|

||||

@@ -155,7 +136,7 @@ In your Maven project, add this dependency:

|

||||

<dependency>

|

||||

<groupId>io.github.ollama4j</groupId>

|

||||

<artifactId>ollama4j</artifactId>

|

||||

<version>1.0.79</version>

|

||||

<version>1.1.0</version>

|

||||

</dependency>

|

||||

```

|

||||

|

||||

@@ -211,7 +192,7 @@ In your Maven project, add this dependency:

|

||||

<dependency>

|

||||

<groupId>io.github.ollama4j</groupId>

|

||||

<artifactId>ollama4j</artifactId>

|

||||

<version>1.0.79</version>

|

||||

<version>1.1.0</version>

|

||||

</dependency>

|

||||

```

|

||||

|

||||

@@ -221,7 +202,7 @@ In your Maven project, add this dependency:

|

||||

|

||||

```groovy

|

||||

dependencies {

|

||||

implementation 'io.github.ollama4j:ollama4j:1.0.79'

|

||||

implementation 'io.github.ollama4j:ollama4j:1.1.0'

|

||||

}

|

||||

```

|

||||

|

||||

@@ -239,56 +220,69 @@ dependencies {

|

||||

|

||||

[lib-shield]: https://img.shields.io/badge/ollama4j-get_latest_version-blue.svg?style=just-the-message&labelColor=gray

|

||||

|

||||

#### API Spec

|

||||

### API Spec

|

||||

|

||||

> [!TIP]

|

||||

> Find the full API specifications on the [website](https://ollama4j.github.io/ollama4j/).

|

||||

|

||||

#### Development

|

||||

## Examples

|

||||

|

||||

Build:

|

||||

For practical examples and usage patterns of the Ollama4j library, check out

|

||||

the [ollama4j-examples](https://github.com/ollama4j/ollama4j-examples) repository.

|

||||

|

||||

## Development

|

||||

|

||||

Make sure you have `pre-commit` installed.

|

||||

|

||||

With `brew`:

|

||||

|

||||

```shell

|

||||

brew install pre-commit

|

||||

```

|

||||

|

||||

With `pip`:

|

||||

|

||||

```shell

|

||||

pip install pre-commit

|

||||

```

|

||||

|

||||

#### Setup dev environment

|

||||

|

||||

> **Note**

|

||||

> If you're on Windows, install [Chocolatey Package Manager for Windows](https://chocolatey.org/install) and then

|

||||

> install `make` by running `choco install make`. Just a little tip - run the command with administrator privileges if

|

||||

> installation fails.

|

||||

|

||||

```shell

|

||||

make dev

|

||||

```

|

||||

|

||||

#### Build

|

||||

|

||||

```shell

|

||||

make build

|

||||

```

|

||||

|

||||

Run unit tests:

|

||||

#### Run unit tests

|

||||

|

||||

```shell

|

||||

make unit-tests

|

||||

```

|

||||

|

||||

Run integration tests:

|

||||

#### Run integration tests

|

||||

|

||||

Make sure you have Docker running as this uses [testcontainers](https://testcontainers.com/) to run the integration

|

||||

tests against an Ollama Docker container.

|

||||

|

||||

```shell

|

||||

make integration-tests

|

||||

```

|

||||

|

||||

#### Releases

|

||||

### Releases

|

||||

|

||||

Newer artifacts are published via GitHub Actions CI workflow when a new release is created from `main` branch.

|

||||

|

||||

#### Who's using Ollama4j?

|

||||

|

||||

- `Datafaker`: a library to generate fake data

|

||||

- https://github.com/datafaker-net/datafaker-experimental/tree/main/ollama-api

|

||||

- `Vaadin Web UI`: UI-Tester for Interactions with Ollama via ollama4j

|

||||

- https://github.com/TEAMPB/ollama4j-vaadin-ui

|

||||

- `ollama-translator`: A Minecraft 1.20.6 Spigot plugin that makes it easy to break language barriers by using Ollama on the

|

||||

server to translate all messages into a specific target language.

|

||||

- https://github.com/liebki/ollama-translator

|

||||

- https://www.reddit.com/r/fabricmc/comments/1e65x5s/comment/ldr2vcf/

|

||||

- `Ollama4j Web UI`: A web UI for Ollama written in Java using Spring Boot and Vaadin framework and

|

||||

Ollama4j.

|

||||

- https://github.com/ollama4j/ollama4j-web-ui

|

||||

- `JnsCLI`: A command-line tool for Jenkins that manages jobs, builds, and configurations directly from the terminal while offering AI-powered error analysis for quick troubleshooting.

|

||||

- https://github.com/mirum8/jnscli

|

||||

|

||||

#### Traction

|

||||

|

||||

[](https://star-history.com/#ollama4j/ollama4j&Date)

|

||||

|

||||

### Get Involved

|

||||

## Get Involved

|

||||

|

||||

<div align="center">

|

||||

|

||||

@@ -300,6 +294,40 @@ Newer artifacts are published via GitHub Actions CI workflow when a new release

|

||||

|

||||

</div>

|

||||

|

||||

Contributions are most welcome! Whether it's reporting a bug, proposing an enhancement, or helping

|

||||

with code - any sort of contribution is much appreciated.

|

||||

|

||||

<div style="font-size: 15px; font-weight: bold; padding-top: 10px; padding-bottom: 10px; border: 1px solid" align="center">

|

||||

If you like or use this project, please give us a ⭐. It's a free way to show your support.

|

||||

</div>

|

||||

|

||||

## Who's using Ollama4j?

|

||||

|

||||

| # | Project Name | Description | Link |

|

||||

|----|-------------------|--------------------------------------------------------------------------------------------------------------------------------------------------------------------|-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------|

|

||||

| 1 | Datafaker | A library to generate fake data | [GitHub](https://github.com/datafaker-net/datafaker-experimental/tree/main/ollama-api) |

|

||||

| 2 | Vaadin Web UI | UI-Tester for interactions with Ollama via ollama4j | [GitHub](https://github.com/TEAMPB/ollama4j-vaadin-ui) |

|

||||

| 3 | ollama-translator | A Minecraft 1.20.6 Spigot plugin that translates all messages into a specific target language via Ollama | [GitHub](https://github.com/liebki/ollama-translator) |

|

||||