Mirror of https://github.com/amithkoujalgi/ollama4j.git, synced 2025-10-14 01:18:58 +02:00

Compare commits

No commits in common. "main" and "1.1.1" have entirely different histories.

@ -1,5 +1,5 @@
<div align="center">
<img src='https://raw.githubusercontent.com/ollama4j/ollama4j/refs/heads/main/ollama4j-new.jpeg' width='200' alt="ollama4j-icon">
<img src='https://raw.githubusercontent.com/ollama4j/ollama4j/65a9d526150da8fcd98e2af6a164f055572bf722/ollama4j.jpeg' width='100' alt="ollama4j-icon">

### Ollama4j

@ -1,90 +0,0 @@

|

||||

---

|

||||

sidebar_position: 5

|

||||

|

||||

title: Metrics

|

||||

---

|

||||

|

||||

import CodeEmbed from '@site/src/components/CodeEmbed';

|

||||

|

||||

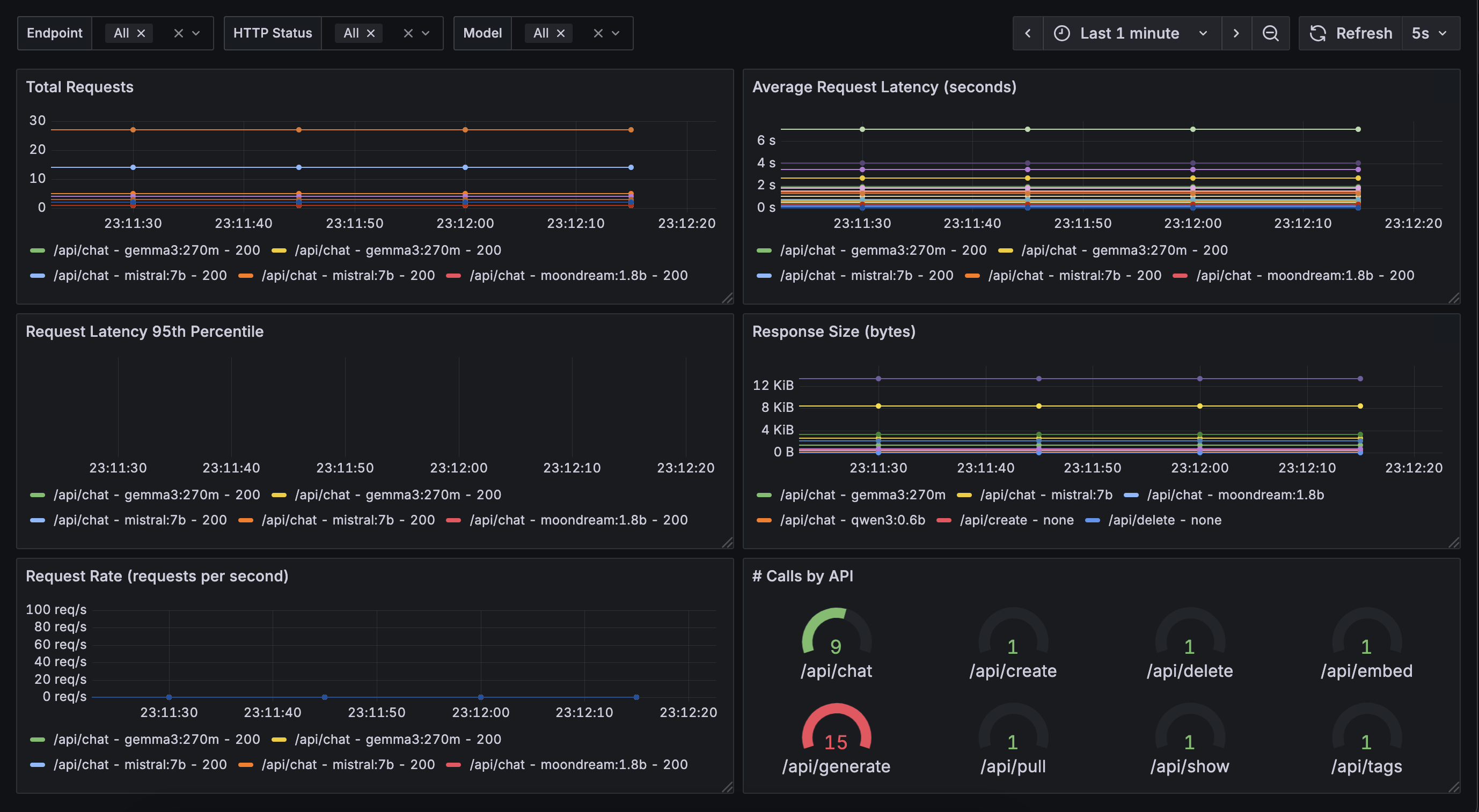

# Metrics

|

||||

|

||||

:::warning[Note]

|

||||

This is work in progress

|

||||

:::

|

||||

|

||||

Monitoring and understanding the performance of your models and requests is crucial for optimizing and maintaining your applications. The Ollama4j library provides built-in support for collecting and exposing various metrics, such as request counts, response times, and error rates. These metrics can help you:

- Track usage patterns and identify bottlenecks
- Monitor the health and reliability of your services
- Set up alerts for abnormal behavior
- Gain insights for scaling and optimization

## Available Metrics

Ollama4j exposes several key metrics, including:

- **Total Requests**: The number of requests processed by the model.
- **Response Time**: The time taken to generate a response for each request.
- **Error Rate**: The percentage of requests that resulted in errors.
- **Active Sessions**: The number of concurrent sessions or users.

These metrics can be accessed programmatically or integrated with monitoring tools such as Prometheus or Grafana for visualization and alerting.
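The metric definitions above can be illustrated with a small in-process tracker. This is a minimal, stdlib-only sketch and not the Ollama4j metrics API. The class `RequestMetricsSketch` and its methods are hypothetical, and "active sessions" is approximated as the number of requests currently in flight.

```java
import java.util.concurrent.atomic.AtomicLong;

/** Hypothetical in-process tracker for the metrics listed above (not part of Ollama4j). */
class RequestMetricsSketch {
    private final AtomicLong totalRequests = new AtomicLong();
    private final AtomicLong failedRequests = new AtomicLong();
    private final AtomicLong totalResponseNanos = new AtomicLong();
    private final AtomicLong inFlight = new AtomicLong();

    /** Call when a request starts; returns the start timestamp to pass to end(). */
    long begin() {
        inFlight.incrementAndGet();
        return System.nanoTime();
    }

    /** Call when a request finishes, successfully or not. */
    void end(long startNanos, boolean failed) {
        totalResponseNanos.addAndGet(System.nanoTime() - startNanos);
        totalRequests.incrementAndGet();
        if (failed) {
            failedRequests.incrementAndGet();
        }
        inFlight.decrementAndGet();
    }

    /** Total Requests: number of completed requests. */
    long totalRequests() {
        return totalRequests.get();
    }

    /** Error Rate: percentage of completed requests that failed (0 if none completed). */
    double errorRatePercent() {
        long total = totalRequests.get();
        return total == 0 ? 0.0 : 100.0 * failedRequests.get() / total;
    }

    /** Response Time: mean duration in milliseconds over completed requests. */
    double averageResponseMillis() {
        long total = totalRequests.get();
        return total == 0 ? 0.0 : totalResponseNanos.get() / 1_000_000.0 / total;
    }

    /** Requests currently in flight, a rough stand-in for "active sessions". */
    long activeRequests() {
        return inFlight.get();
    }

    public static void main(String[] args) {
        RequestMetricsSketch metrics = new RequestMetricsSketch();
        long ok = metrics.begin();
        metrics.end(ok, false);
        long bad = metrics.begin();
        metrics.end(bad, true);
        System.out.println("total=" + metrics.totalRequests()
                + " errorRate=" + metrics.errorRatePercent() + "%");
    }
}
```

Each counter is updated atomically. The derived values, however, read two counters separately, so under concurrent load they are approximate snapshots. Exporters such as Prometheus' simpleclient typically publish raw counters and leave rates and averages to the query side.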

## Example Metrics Dashboard

Below is an example of a metrics dashboard visualizing some of these key statistics:

## Example: Accessing Metrics in Java

You can access and display metrics in your Java application using Ollama4j.

Make sure you have added the `simpleclient_httpserver` dependency to your application so that it can expose metrics via the `/metrics` endpoint:

```xml
<dependency>
    <groupId>io.prometheus</groupId>
    <artifactId>simpleclient_httpserver</artifactId>
    <version>0.16.0</version>
</dependency>
```

Here is a sample code snippet demonstrating how to collect metrics and expose them over HTTP so they can be scraped and visualized (for example, in Grafana):

<CodeEmbed src="https://raw.githubusercontent.com/ollama4j/ollama4j-examples/refs/heads/main/src/main/java/io/github/ollama4j/examples/MetricsExample.java" />

This starts a simple HTTP server with the `/metrics` endpoint enabled. Metrics are then available at http://localhost:8080/metrics.
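The following stdlib-only sketch shows what the `/metrics` endpoint serves: an HTTP handler that writes counters in the Prometheus text exposition format. It uses the JDK's built-in `com.sun.net.httpserver.HttpServer` instead of `simpleclient_httpserver`. The metric names (`ollama_requests_total`, `ollama_request_errors_total`) are hypothetical and are not the names Ollama4j registers.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.util.Map;
import java.util.TreeMap;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicLong;

/** Hypothetical minimal /metrics endpoint; simpleclient_httpserver does this for you. */
class MetricsEndpointSketch {
    private final Map<String, AtomicLong> counters = new ConcurrentHashMap<>();

    /** Increments the named counter, creating it on first use. */
    void increment(String name) {
        counters.computeIfAbsent(name, k -> new AtomicLong()).incrementAndGet();
    }

    /** Renders all counters in the Prometheus text format, sorted by metric name. */
    String render() {
        StringBuilder sb = new StringBuilder();
        for (Map.Entry<String, AtomicLong> e : new TreeMap<>(counters).entrySet()) {
            sb.append("# TYPE ").append(e.getKey()).append(" counter\n");
            sb.append(e.getKey()).append(' ').append(e.getValue().get()).append('\n');
        }
        return sb.toString();
    }

    /** Starts a server that returns render() for GET http://localhost:<port>/metrics. */
    HttpServer start(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        server.createContext("/metrics", exchange -> {
            byte[] body = render().getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders()
                    .set("Content-Type", "text/plain; version=0.0.4; charset=utf-8");
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) {
        MetricsEndpointSketch metrics = new MetricsEndpointSketch();
        metrics.increment("ollama_requests_total");
        metrics.increment("ollama_requests_total");
        metrics.increment("ollama_request_errors_total");
        System.out.print(metrics.render());
    }
}
```

Calling `start(8080)` on an instance would serve the same text at http://localhost:8080/metrics. With `simpleclient_httpserver` on the classpath, you would normally let its `HTTPServer` export the default registry rather than write this by hand.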

## Integrating with Monitoring Tools

### Grafana

Use the following sample `docker-compose` file to host a basic Grafana container.

<CodeEmbed src="https://raw.githubusercontent.com/ollama4j/ollama4j-examples/refs/heads/main/docker/docker-compose.yml" />

Then run:

```shell
docker-compose -f path/to/your/docker-compose.yml up
```

This starts Grafana at http://localhost:3000.

[//]: # (To integrate Ollama4j metrics with external monitoring systems, you can export the metrics endpoint and configure your)

[//]: # (monitoring tool to scrape or collect the data. Refer to the [integration guide](../integration/monitoring.md) for)

[//]: # (detailed instructions.)

[//]: # ()

[//]: # (For more information on customizing and extending metrics, see the [API documentation](../api/metrics.md).)

26 docs/package-lock.json generated

@ -18,8 +18,8 @@
        "clsx": "^2.1.1",
        "font-awesome": "^4.7.0",
        "prism-react-renderer": "^2.4.1",
        "react": "^19.2.0",
        "react-dom": "^19.2.0",
        "react": "^19.1.1",
        "react-dom": "^19.1.1",
        "react-icons": "^5.5.0",
        "react-image-gallery": "^1.4.0"
      },

@ -15834,24 +15834,24 @@
      }
    },
    "node_modules/react": {
      "version": "19.2.0",
      "resolved": "https://registry.npmjs.org/react/-/react-19.2.0.tgz",
      "integrity": "sha512-tmbWg6W31tQLeB5cdIBOicJDJRR2KzXsV7uSK9iNfLWQ5bIZfxuPEHp7M8wiHyHnn0DD1i7w3Zmin0FtkrwoCQ==",
      "version": "19.1.1",
      "resolved": "https://registry.npmjs.org/react/-/react-19.1.1.tgz",
      "integrity": "sha512-w8nqGImo45dmMIfljjMwOGtbmC/mk4CMYhWIicdSflH91J9TyCyczcPFXJzrZ/ZXcgGRFeP6BU0BEJTw6tZdfQ==",
      "license": "MIT",
      "engines": {
        "node": ">=0.10.0"
      }
    },
    "node_modules/react-dom": {
      "version": "19.2.0",
      "resolved": "https://registry.npmjs.org/react-dom/-/react-dom-19.2.0.tgz",
      "integrity": "sha512-UlbRu4cAiGaIewkPyiRGJk0imDN2T3JjieT6spoL2UeSf5od4n5LB/mQ4ejmxhCFT1tYe8IvaFulzynWovsEFQ==",
      "version": "19.1.1",
      "resolved": "https://registry.npmjs.org/react-dom/-/react-dom-19.1.1.tgz",
      "integrity": "sha512-Dlq/5LAZgF0Gaz6yiqZCf6VCcZs1ghAJyrsu84Q/GT0gV+mCxbfmKNoGRKBYMJ8IEdGPqu49YWXD02GCknEDkw==",
      "license": "MIT",
      "dependencies": {
        "scheduler": "^0.27.0"
        "scheduler": "^0.26.0"
      },
      "peerDependencies": {
        "react": "^19.2.0"
        "react": "^19.1.1"
      }
    },
    "node_modules/react-fast-compare": {

@ -16551,9 +16551,9 @@
      "license": "ISC"
    },
    "node_modules/scheduler": {
      "version": "0.27.0",
      "resolved": "https://registry.npmjs.org/scheduler/-/scheduler-0.27.0.tgz",
      "integrity": "sha512-eNv+WrVbKu1f3vbYJT/xtiF5syA5HPIMtf9IgY/nKg0sWqzAUEvqY/xm7OcZc/qafLx/iO9FgOmeSAp4v5ti/Q==",
      "version": "0.26.0",
      "resolved": "https://registry.npmjs.org/scheduler/-/scheduler-0.26.0.tgz",
      "integrity": "sha512-NlHwttCI/l5gCPR3D1nNXtWABUmBwvZpEQiD4IXSbIDq8BzLIK/7Ir5gTFSGZDUu37K5cMNp0hFtzO38sC7gWA==",
      "license": "MIT"
    },
    "node_modules/schema-dts": {

@ -24,8 +24,8 @@
    "clsx": "^2.1.1",
    "font-awesome": "^4.7.0",
    "prism-react-renderer": "^2.4.1",
    "react": "^19.2.0",
    "react-dom": "^19.2.0",
    "react": "^19.1.1",
    "react-dom": "^19.1.1",
    "react-icons": "^5.5.0",
    "react-image-gallery": "^1.4.0"
  },

Binary file not shown (before: 67 KiB).

@ -70,14 +70,10 @@ public class Ollama {
     */
    @Setter private long requestTimeoutSeconds = 10;

    /**
     * The read timeout in seconds for image URLs.
     */
    /** The read timeout in seconds for image URLs. */
    @Setter private int imageURLReadTimeoutSeconds = 10;

    /**
     * The connect timeout in seconds for image URLs.
     */
    /** The connect timeout in seconds for image URLs. */
    @Setter private int imageURLConnectTimeoutSeconds = 10;

    /**

@ -284,9 +280,9 @@ public class Ollama {
    /**
     * Handles retry backoff for pullModel.
     *
     * @param modelName the name of the model being pulled
     * @param currentRetry the current retry attempt (zero-based)
     * @param maxRetries the maximum number of retries allowed
     * @param modelName the name of the model being pulled
     * @param currentRetry the current retry attempt (zero-based)
     * @param maxRetries the maximum number of retries allowed
     * @param baseDelayMillis the base delay in milliseconds for exponential backoff
     * @throws InterruptedException if the thread is interrupted during sleep
     */
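The javadoc above describes exponential backoff between pull retries. The sketch below illustrates the idea under two assumptions: the delay doubles with each zero-based attempt (`baseDelayMillis * 2^currentRetry`), and `maxRetries` counts total attempts. `BackoffSketch` and its methods are hypothetical, and this is not the Ollama4j implementation.

```java
/** Hypothetical exponential-backoff helper; not the Ollama4j implementation. */
class BackoffSketch {

    /** Delay before the next attempt: baseDelayMillis * 2^currentRetry, exponent clamped to 0..30. */
    static long delayMillis(long baseDelayMillis, int currentRetry) {
        int exponent = Math.min(Math.max(currentRetry, 0), 30);
        return baseDelayMillis * (1L << exponent);
    }

    /**
     * Sleeps before the next attempt. Assumes maxRetries counts total attempts, so nothing is
     * waited for after the last one. Thread.sleep throws InterruptedException if interrupted.
     */
    static void sleepBeforeRetry(int currentRetry, int maxRetries, long baseDelayMillis)
            throws InterruptedException {
        if (currentRetry + 1 >= maxRetries) {
            return; // no further attempt will follow
        }
        Thread.sleep(delayMillis(baseDelayMillis, currentRetry));
    }

    public static void main(String[] args) {
        for (int retry = 0; retry < 4; retry++) {
            System.out.println("retry " + retry + ": wait " + delayMillis(500, retry) + " ms");
        }
    }
}
```

Doubling spreads retries out quickly, which gives a busy or restarting server room to recover. Clamping the exponent keeps the shift within range for large retry counts.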

@ -380,7 +376,7 @@ public class Ollama {
     * Returns true if the response indicates a successful pull.
     *
     * @param modelPullResponse the response from the model pull
     * @param modelName the name of the model
     * @param modelName the name of the model
     * @return true if the pull was successful, false otherwise
     * @throws OllamaException if the response contains an error
     */

@ -605,7 +601,7 @@ public class Ollama {
    /**
     * Deletes a model from the Ollama server.
     *
     * @param modelName the name of the model to be deleted
     * @param modelName the name of the model to be deleted
     * @param ignoreIfNotPresent ignore errors if the specified model is not present on the Ollama server
     * @throws OllamaException if the response indicates an error status
     */

@ -762,7 +758,7 @@ public class Ollama {
     * Generates a response from a model using the specified parameters and stream observer.
     * If {@code streamObserver} is provided, streaming is enabled; otherwise, a synchronous call is made.
     *
     * @param request the generation request
     * @param request the generation request
     * @param streamObserver the stream observer for streaming responses, or null for synchronous
     * @return the result of the generation
     * @throws OllamaException if the request fails

@ -827,10 +823,10 @@ public class Ollama {
    /**
     * Generates a response from a model asynchronously, returning a streamer for results.
     *
     * @param model the model name
     * @param model the model name
     * @param prompt the prompt to send
     * @param raw whether to use raw mode
     * @param think whether to use "think" mode
     * @param raw whether to use raw mode
     * @param think whether to use "think" mode
     * @return an OllamaAsyncResultStreamer for streaming results
     * @throws OllamaException if the request fails
     */

@ -865,9 +861,9 @@ public class Ollama {
     *
     * <p>Note: the OllamaChatRequestModel#getStream() property is not implemented.
     *
     * @param request request object to be sent to the server
     * @param request request object to be sent to the server
     * @param tokenHandler callback handler to handle the last token from stream (caution: the
     *     previous tokens from stream will not be concatenated)
     *     previous tokens from stream will not be concatenated)
     * @return {@link OllamaChatResult}
     * @throws OllamaException if the response indicates an error status
     */

@ -962,16 +958,12 @@ public class Ollama {
     * Registers multiple tools in the tool registry.
     *
     * @param tools a list of {@link Tools.Tool} objects to register. Each tool contains its
     *     specification and function.
     *     specification and function.
     */
    public void registerTools(List<Tools.Tool> tools) {
        toolRegistry.addTools(tools);
    }

    public List<Tools.Tool> getRegisteredTools() {
        return toolRegistry.getRegisteredTools();
    }

    /**
     * Deregisters all tools from the tool registry. This method removes all registered tools,
     * effectively clearing the registry.

@ -987,7 +979,7 @@ public class Ollama {
     * and recursively registers annotated tools from all the providers specified in the annotation.
     *
     * @throws OllamaException if the caller's class is not annotated with {@link
     *     OllamaToolService} or if reflection-based instantiation or invocation fails
     *     OllamaToolService} or if reflection-based instantiation or invocation fails
     */
    public void registerAnnotatedTools() throws OllamaException {
        try {

@ -1135,7 +1127,7 @@ public class Ollama {
     * This method synchronously calls the Ollama API. If a stream handler is provided,
     * the request will be streamed; otherwise, a regular synchronous request will be made.
     *
     * @param ollamaRequestModel the request model containing necessary parameters for the Ollama API request
     * @param ollamaRequestModel the request model containing necessary parameters for the Ollama API request
     * @param thinkingStreamHandler the stream handler for "thinking" tokens, or null if not used
     * @param responseStreamHandler the stream handler to process streaming responses, or null for non-streaming requests
     * @return the result of the Ollama API request
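The javadoc above describes one entry point that streams when a handler is supplied and otherwise returns only the complete result. The sketch below shows that dispatch using the stdlib only. `java.util.function.Consumer<String>` stands in for the stream handler type, and a hard-coded token list stands in for the server response. All names are hypothetical, and no HTTP call is made.

```java
import java.util.List;
import java.util.function.Consumer;

/** Hypothetical illustration of "stream if a handler is given, otherwise return the whole result". */
class StreamOrSyncSketch {

    /** Stand-in for the tokens a server would produce for one request. */
    private static final List<String> TOKENS = List.of("Hello", ", ", "world", "!");

    static String generate(Consumer<String> responseStreamHandler) {
        StringBuilder full = new StringBuilder();
        for (String token : TOKENS) {
            if (responseStreamHandler != null) {
                responseStreamHandler.accept(token); // streaming: hand over each token as it arrives
            }
            full.append(token);
        }
        return full.toString(); // both modes still return the aggregated result
    }

    public static void main(String[] args) {
        System.out.println(generate(null)); // synchronous: one complete string
        generate(token -> System.out.print(token)); // streamed token by token
        System.out.println();
    }
}
```

Accumulating the tokens in both modes means callers that stream still receive a final result, which matches the single `@return` in the javadoc.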

@ -11,9 +11,6 @@ package io.github.ollama4j.models.chat;
import io.github.ollama4j.models.request.OllamaCommonRequest;
import io.github.ollama4j.tools.Tools;
import io.github.ollama4j.utils.OllamaRequestBody;
import io.github.ollama4j.utils.Options;
import java.io.File;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import lombok.Getter;

@ -23,8 +20,8 @@ import lombok.Setter;
 * Defines a Request to use against the ollama /api/chat endpoint.
 *
 * @see <a href=
 *     "https://github.com/ollama/ollama/blob/main/docs/api.md#generate-a-chat-completion">Generate
 *     Chat Completion</a>
 *     "https://github.com/ollama/ollama/blob/main/docs/api.md#generate-a-chat-completion">Generate
 *     Chat Completion</a>
 */
@Getter
@Setter

@ -39,15 +36,11 @@ public class OllamaChatRequest extends OllamaCommonRequest implements OllamaRequ
    /**
     * Controls whether tools are automatically executed.
     *
     * <p>
     * If set to {@code true} (the default), tools will be automatically
     * used/applied by the
     * library. If set to {@code false}, tool calls will be returned to the client
     * for manual
     * <p>If set to {@code true} (the default), tools will be automatically used/applied by the
     * library. If set to {@code false}, tool calls will be returned to the client for manual
     * handling.
     *
     * <p>
     * Disabling this should be an explicit operation.
     * <p>Disabling this should be an explicit operation.
     */
    private boolean useTools = true;

@ -64,116 +57,7 @@ public class OllamaChatRequest extends OllamaCommonRequest implements OllamaRequ
        if (!(o instanceof OllamaChatRequest)) {
            return false;
        }

        return this.toString().equals(o.toString());
    }

    // --- Builder-like fluent API methods ---

    public static OllamaChatRequest builder() {
        OllamaChatRequest req = new OllamaChatRequest();
        req.setMessages(new ArrayList<>());
        return req;
    }

    public OllamaChatRequest withModel(String model) {
        this.setModel(model);
        return this;
    }

    public OllamaChatRequest withMessage(OllamaChatMessageRole role, String content) {
        return withMessage(role, content, Collections.emptyList());
    }

    public OllamaChatRequest withMessage(
            OllamaChatMessageRole role, String content, List<OllamaChatToolCalls> toolCalls) {
        if (this.messages == null || this.messages == Collections.EMPTY_LIST) {
            this.messages = new ArrayList<>();
        }
        this.messages.add(new OllamaChatMessage(role, content, null, toolCalls, null));
        return this;
    }

    public OllamaChatRequest withMessage(
            OllamaChatMessageRole role,
            String content,
            List<OllamaChatToolCalls> toolCalls,
            List<File> images) {
        if (this.messages == null || this.messages == Collections.EMPTY_LIST) {
            this.messages = new ArrayList<>();
        }

        List<byte[]> imagesAsBytes = new ArrayList<>();
        if (images != null) {
            for (File image : images) {
                try {
                    imagesAsBytes.add(java.nio.file.Files.readAllBytes(image.toPath()));
                } catch (java.io.IOException e) {
                    throw new RuntimeException(
                            "Failed to read image file: " + image.getAbsolutePath(), e);
                }
            }
        }
        this.messages.add(new OllamaChatMessage(role, content, null, toolCalls, imagesAsBytes));
        return this;
    }

    public OllamaChatRequest withMessages(List<OllamaChatMessage> messages) {
        this.setMessages(messages);
        return this;
    }

    public OllamaChatRequest withOptions(Options options) {
        if (options != null) {
            this.setOptions(options.getOptionsMap());
        }
        return this;
    }

    public OllamaChatRequest withGetJsonResponse() {
        this.setFormat("json");
        return this;
    }

    public OllamaChatRequest withTemplate(String template) {
        this.setTemplate(template);
        return this;
    }

    public OllamaChatRequest withStreaming() {
        this.setStream(true);
        return this;
    }

    public OllamaChatRequest withKeepAlive(String keepAlive) {
        this.setKeepAlive(keepAlive);
        return this;
    }

    public OllamaChatRequest withThinking(boolean think) {
        this.setThink(think);
        return this;
    }

    public OllamaChatRequest withUseTools(boolean useTools) {
        this.setUseTools(useTools);
        return this;
    }

    public OllamaChatRequest withTools(List<Tools.Tool> tools) {
        this.setTools(tools);
        return this;
    }

    public OllamaChatRequest build() {
        return this;
    }

    public void reset() {
        // Only clear the messages, keep model and think as is
        if (this.messages == null || this.messages == Collections.EMPTY_LIST) {
            this.messages = new ArrayList<>();
        } else {
            this.messages.clear();
        }
    }
}

@ -0,0 +1,176 @@
/*
 * Ollama4j - Java library for interacting with Ollama server.
 * Copyright (c) 2025 Amith Koujalgi and contributors.
 *
 * Licensed under the MIT License (the "License");
 * you may not use this file except in compliance with the License.
 *
*/
package io.github.ollama4j.models.chat;

import io.github.ollama4j.utils.Options;
import io.github.ollama4j.utils.Utils;
import java.io.File;
import java.io.IOException;
import java.nio.file.Files;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.stream.Collectors;
import lombok.Setter;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

/** Helper class for creating {@link OllamaChatRequest} objects using the builder-pattern. */
public class OllamaChatRequestBuilder {

    private static final Logger LOG = LoggerFactory.getLogger(OllamaChatRequestBuilder.class);

    private int imageURLConnectTimeoutSeconds = 10;
    private int imageURLReadTimeoutSeconds = 10;
    private OllamaChatRequest request;
    @Setter private boolean useTools = true;

    private OllamaChatRequestBuilder() {
        request = new OllamaChatRequest();
        request.setMessages(new ArrayList<>());
    }

    public static OllamaChatRequestBuilder builder() {
        return new OllamaChatRequestBuilder();
    }

    public OllamaChatRequestBuilder withImageURLConnectTimeoutSeconds(
            int imageURLConnectTimeoutSeconds) {
        this.imageURLConnectTimeoutSeconds = imageURLConnectTimeoutSeconds;
        return this;
    }

    public OllamaChatRequestBuilder withImageURLReadTimeoutSeconds(int imageURLReadTimeoutSeconds) {
        this.imageURLReadTimeoutSeconds = imageURLReadTimeoutSeconds;
        return this;
    }

    public OllamaChatRequestBuilder withModel(String model) {
        request.setModel(model);
        return this;
    }

    public void reset() {
        request = new OllamaChatRequest(request.getModel(), request.isThink(), new ArrayList<>());
    }

    public OllamaChatRequestBuilder withMessage(OllamaChatMessageRole role, String content) {
        return withMessage(role, content, Collections.emptyList());
    }

    public OllamaChatRequestBuilder withMessage(
            OllamaChatMessageRole role, String content, List<OllamaChatToolCalls> toolCalls) {
        List<OllamaChatMessage> messages = this.request.getMessages();
        messages.add(new OllamaChatMessage(role, content, null, toolCalls, null));
        return this;
    }

    public OllamaChatRequestBuilder withMessage(
            OllamaChatMessageRole role,
            String content,
            List<OllamaChatToolCalls> toolCalls,
            List<File> images) {
        List<OllamaChatMessage> messages = this.request.getMessages();
        List<byte[]> binaryImages =
                images.stream()
                        .map(
                                file -> {
                                    try {
                                        return Files.readAllBytes(file.toPath());
                                    } catch (IOException e) {
                                        LOG.warn(
                                                "File '{}' could not be accessed, will not add to"
                                                        + " message!",
                                                file.toPath(),
                                                e);
                                        return new byte[0];
                                    }
                                })
                        .collect(Collectors.toList());
        messages.add(new OllamaChatMessage(role, content, null, toolCalls, binaryImages));
        return this;
    }

    public OllamaChatRequestBuilder withMessage(
            OllamaChatMessageRole role,
            String content,
            List<OllamaChatToolCalls> toolCalls,
            String... imageUrls)
            throws IOException, InterruptedException {
        List<OllamaChatMessage> messages = this.request.getMessages();
        List<byte[]> binaryImages = null;
        if (imageUrls.length > 0) {
            binaryImages = new ArrayList<>();
            for (String imageUrl : imageUrls) {
                try {
                    binaryImages.add(
                            Utils.loadImageBytesFromUrl(
                                    imageUrl,
                                    imageURLConnectTimeoutSeconds,
                                    imageURLReadTimeoutSeconds));
                } catch (InterruptedException e) {
                    LOG.error("Failed to load image from URL: '{}'. Cause: {}", imageUrl, e);
                    Thread.currentThread().interrupt();
                    throw new InterruptedException(
                            "Interrupted while loading image from URL: " + imageUrl);
                } catch (IOException e) {
                    LOG.error(
                            "IOException occurred while loading image from URL '{}'. Cause: {}",
                            imageUrl,
                            e.getMessage(),
                            e);
                    throw new IOException(
                            "IOException while loading image from URL: " + imageUrl, e);
                }
            }
        }
        messages.add(new OllamaChatMessage(role, content, null, toolCalls, binaryImages));
        return this;
    }

    public OllamaChatRequestBuilder withMessages(List<OllamaChatMessage> messages) {
        request.setMessages(messages);
        return this;
    }

    public OllamaChatRequestBuilder withOptions(Options options) {
        this.request.setOptions(options.getOptionsMap());
        return this;
    }

    public OllamaChatRequestBuilder withGetJsonResponse() {
        this.request.setFormat("json");
        return this;
    }

    public OllamaChatRequestBuilder withTemplate(String template) {
        this.request.setTemplate(template);
        return this;
    }

    public OllamaChatRequestBuilder withStreaming() {
        this.request.setStream(true);
        return this;
    }

    public OllamaChatRequestBuilder withKeepAlive(String keepAlive) {
        this.request.setKeepAlive(keepAlive);
        return this;
    }

    public OllamaChatRequestBuilder withThinking(boolean think) {
        this.request.setThink(think);
        return this;
    }

    public OllamaChatRequest build() {
        request.setUseTools(useTools);
        return request;
    }
}
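The two versions in this comparison handle unreadable image files differently:

- `OllamaChatRequestBuilder.withMessage(..., List<File> images)` logs a warning and substitutes an empty `byte[]`.
- `OllamaChatRequest.withMessage(..., List<File> images)` throws a `RuntimeException`.

The stdlib-only sketch below shows both strategies side by side. The class and method names are hypothetical. `System.err` stands in for the SLF4J logger, and `UncheckedIOException` (a `RuntimeException` subclass) replaces the plain `RuntimeException`.

```java
import java.io.File;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical comparison of lenient vs. strict image loading (not Ollama4j code). */
class ImageLoadingSketch {

    /** Lenient: warn and keep an empty placeholder so the message is still sent. */
    static List<byte[]> readLenient(List<File> images) {
        List<byte[]> result = new ArrayList<>();
        for (File file : images) {
            try {
                result.add(Files.readAllBytes(file.toPath()));
            } catch (IOException e) {
                System.err.println("File '" + file + "' could not be accessed: " + e);
                result.add(new byte[0]);
            }
        }
        return result;
    }

    /** Strict: fail fast so a missing image is never silently dropped. */
    static List<byte[]> readStrict(List<File> images) {
        List<byte[]> result = new ArrayList<>();
        for (File file : images) {
            try {
                result.add(Files.readAllBytes(file.toPath()));
            } catch (IOException e) {
                throw new UncheckedIOException(
                        "Failed to read image file: " + file.getAbsolutePath(), e);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<File> missing = List.of(new File("does-not-exist.png"));
        System.out.println("lenient result size: " + readLenient(missing).size());
        try {
            readStrict(missing);
        } catch (UncheckedIOException e) {
            System.out.println("strict: " + e.getMessage());
        }
    }
}
```

The lenient variant keeps the conversation going but sends the model an empty image. The strict variant surfaces the problem to the caller immediately.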

@ -11,14 +11,7 @@ package io.github.ollama4j.models.generate;
import io.github.ollama4j.models.request.OllamaCommonRequest;
import io.github.ollama4j.tools.Tools;
import io.github.ollama4j.utils.OllamaRequestBody;
import io.github.ollama4j.utils.Options;
import java.io.File;
import java.io.IOException;
import java.nio.file.Files;
import java.util.ArrayList;
import java.util.Base64;
import java.util.List;
import java.util.Map;
import lombok.Getter;
import lombok.Setter;

@ -48,100 +41,6 @@ public class OllamaGenerateRequest extends OllamaCommonRequest implements Ollama
        this.images = images;
    }

    // --- Builder-style methods ---

    public static OllamaGenerateRequest builder() {
        return new OllamaGenerateRequest();
    }

    public OllamaGenerateRequest withPrompt(String prompt) {
        this.setPrompt(prompt);
        return this;
    }

    public OllamaGenerateRequest withTools(List<Tools.Tool> tools) {
        this.setTools(tools);
        return this;
    }

    public OllamaGenerateRequest withModel(String model) {
        this.setModel(model);
        return this;
    }

    public OllamaGenerateRequest withGetJsonResponse() {
        this.setFormat("json");
        return this;
    }

    public OllamaGenerateRequest withOptions(Options options) {
        this.setOptions(options.getOptionsMap());
        return this;
    }

    public OllamaGenerateRequest withTemplate(String template) {
        this.setTemplate(template);
        return this;
    }

    public OllamaGenerateRequest withStreaming(boolean streaming) {
        this.setStream(streaming);
        return this;
    }

    public OllamaGenerateRequest withKeepAlive(String keepAlive) {
        this.setKeepAlive(keepAlive);
        return this;
    }

    public OllamaGenerateRequest withRaw(boolean raw) {
        this.setRaw(raw);
        return this;
    }

    public OllamaGenerateRequest withThink(boolean think) {
        this.setThink(think);
        return this;
    }

    public OllamaGenerateRequest withUseTools(boolean useTools) {
        this.setUseTools(useTools);
        return this;
    }

    public OllamaGenerateRequest withFormat(Map<String, Object> format) {
        this.setFormat(format);
        return this;
    }

    public OllamaGenerateRequest withSystem(String system) {
        this.setSystem(system);
        return this;
    }

    public OllamaGenerateRequest withContext(String context) {
        this.setContext(context);
        return this;
    }

    public OllamaGenerateRequest withImagesBase64(List<String> images) {
        this.setImages(images);
        return this;
    }

    public OllamaGenerateRequest withImages(List<File> imageFiles) throws IOException {
        List<String> images = new ArrayList<>();
        for (File imageFile : imageFiles) {
            images.add(Base64.getEncoder().encodeToString(Files.readAllBytes(imageFile.toPath())));
        }
        this.setImages(images);
        return this;
    }

    public OllamaGenerateRequest build() {
        return this;
    }

    @Override
    public boolean equals(Object o) {
        if (!(o instanceof OllamaGenerateRequest)) {

@ -0,0 +1,121 @@
/*
 * Ollama4j - Java library for interacting with Ollama server.
 * Copyright (c) 2025 Amith Koujalgi and contributors.
 *
 * Licensed under the MIT License (the "License");
 * you may not use this file except in compliance with the License.
 *
*/
package io.github.ollama4j.models.generate;

import io.github.ollama4j.tools.Tools;
import io.github.ollama4j.utils.Options;
import java.io.File;
import java.io.IOException;
import java.nio.file.Files;
import java.util.ArrayList;
import java.util.Base64;
import java.util.List;

/** Helper class for creating {@link OllamaGenerateRequest} objects using the builder-pattern. */
public class OllamaGenerateRequestBuilder {

    private OllamaGenerateRequestBuilder() {
        request = new OllamaGenerateRequest();
    }

    private OllamaGenerateRequest request;

    public static OllamaGenerateRequestBuilder builder() {
        return new OllamaGenerateRequestBuilder();
    }

    public OllamaGenerateRequest build() {
        return request;
    }

    public OllamaGenerateRequestBuilder withPrompt(String prompt) {
        request.setPrompt(prompt);
        return this;
    }

    public OllamaGenerateRequestBuilder withTools(List<Tools.Tool> tools) {
        request.setTools(tools);
        return this;
    }

    public OllamaGenerateRequestBuilder withModel(String model) {
        request.setModel(model);
        return this;
    }

    public OllamaGenerateRequestBuilder withGetJsonResponse() {
        this.request.setFormat("json");
        return this;
    }

    public OllamaGenerateRequestBuilder withOptions(Options options) {
        this.request.setOptions(options.getOptionsMap());
        return this;
    }

    public OllamaGenerateRequestBuilder withTemplate(String template) {
        this.request.setTemplate(template);
        return this;
    }

    public OllamaGenerateRequestBuilder withStreaming(boolean streaming) {
        this.request.setStream(streaming);
        return this;
    }

    public OllamaGenerateRequestBuilder withKeepAlive(String keepAlive) {
        this.request.setKeepAlive(keepAlive);
        return this;
    }

    public OllamaGenerateRequestBuilder withRaw(boolean raw) {
        this.request.setRaw(raw);
        return this;
    }

    public OllamaGenerateRequestBuilder withThink(boolean think) {
        this.request.setThink(think);
        return this;
    }

    public OllamaGenerateRequestBuilder withUseTools(boolean useTools) {
        this.request.setUseTools(useTools);
        return this;
    }

    public OllamaGenerateRequestBuilder withFormat(java.util.Map<String, Object> format) {
        this.request.setFormat(format);
        return this;
    }

    public OllamaGenerateRequestBuilder withSystem(String system) {
        this.request.setSystem(system);
        return this;
    }

    public OllamaGenerateRequestBuilder withContext(String context) {
        this.request.setContext(context);
        return this;
    }

    public OllamaGenerateRequestBuilder withImagesBase64(java.util.List<String> images) {
        this.request.setImages(images);
        return this;
    }

    public OllamaGenerateRequestBuilder withImages(java.util.List<File> imageFiles)
            throws IOException {
        java.util.List<String> images = new ArrayList<>();
        for (File imageFile : imageFiles) {
            images.add(Base64.getEncoder().encodeToString(Files.readAllBytes(imageFile.toPath())));
        }
        this.request.setImages(images);
        return this;
    }
}

|

||||

@ -96,6 +96,7 @@ public class OllamaChatEndpointCaller extends OllamaEndpointCaller {
getRequestBuilderDefault(uri).POST(body.getBodyPublisher());
HttpRequest request = requestBuilder.build();
LOG.debug("Asking model: {}", body);
System.out.println("Asking model: " + Utils.toJSON(body));
HttpResponse<InputStream> response =
httpClient.send(request, HttpResponse.BodyHandlers.ofInputStream());

@ -18,6 +18,7 @@ import io.github.ollama4j.models.chat.*;
import io.github.ollama4j.models.embed.OllamaEmbedRequest;
import io.github.ollama4j.models.embed.OllamaEmbedResult;
import io.github.ollama4j.models.generate.OllamaGenerateRequest;
import io.github.ollama4j.models.generate.OllamaGenerateRequestBuilder;
import io.github.ollama4j.models.generate.OllamaGenerateStreamObserver;
import io.github.ollama4j.models.response.Model;
import io.github.ollama4j.models.response.ModelDetail;

@ -271,7 +272,7 @@ class OllamaIntegrationTest {
format.put("required", List.of("isNoon"));

OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(TOOLS_MODEL)
.withPrompt(prompt)
.withFormat(format)

@ -298,7 +299,7 @@ class OllamaIntegrationTest {
boolean raw = false;
boolean thinking = false;
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(GENERAL_PURPOSE_MODEL)
.withPrompt(
"What is the capital of France? And what's France's connection with"

@ -326,7 +327,7 @@ class OllamaIntegrationTest {
api.pullModel(GENERAL_PURPOSE_MODEL);
boolean raw = false;
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(GENERAL_PURPOSE_MODEL)
.withPrompt(
"What is the capital of France? And what's France's connection with"

@ -356,7 +357,8 @@ class OllamaIntegrationTest {
void shouldGenerateWithCustomOptions() throws OllamaException {
api.pullModel(GENERAL_PURPOSE_MODEL);

OllamaChatRequest builder = OllamaChatRequest.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.SYSTEM,

@ -388,7 +390,8 @@ class OllamaIntegrationTest {

String expectedResponse = "Bhai";

OllamaChatRequest builder = OllamaChatRequest.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.SYSTEM,

@ -426,7 +429,8 @@ class OllamaIntegrationTest {
@Order(10)
void shouldChatWithHistory() throws Exception {
api.pullModel(THINKING_TOOL_MODEL);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(THINKING_TOOL_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(THINKING_TOOL_MODEL);

OllamaChatRequest requestModel =
builder.withMessage(

@ -477,7 +481,8 @@ class OllamaIntegrationTest {
void shouldChatWithExplicitTool() throws OllamaException {
String theToolModel = TOOLS_MODEL;
api.pullModel(theToolModel);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(theToolModel);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(theToolModel);

api.registerTool(EmployeeFinderToolSpec.getSpecification());

@ -529,7 +534,8 @@ class OllamaIntegrationTest {
void shouldChatWithExplicitToolAndUseTools() throws OllamaException {
String theToolModel = TOOLS_MODEL;
api.pullModel(theToolModel);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(theToolModel);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(theToolModel);

api.registerTool(EmployeeFinderToolSpec.getSpecification());

@ -573,7 +579,8 @@ class OllamaIntegrationTest {
String theToolModel = TOOLS_MODEL;
api.pullModel(theToolModel);

OllamaChatRequest builder = OllamaChatRequest.builder().withModel(theToolModel);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(theToolModel);

api.registerTool(EmployeeFinderToolSpec.getSpecification());

@ -626,7 +633,8 @@ class OllamaIntegrationTest {
void shouldChatWithAnnotatedToolSingleParam() throws OllamaException {
String theToolModel = TOOLS_MODEL;
api.pullModel(theToolModel);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(theToolModel);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(theToolModel);

api.registerAnnotatedTools();

@ -672,7 +680,8 @@ class OllamaIntegrationTest {
void shouldChatWithAnnotatedToolMultipleParams() throws OllamaException {
String theToolModel = TOOLS_MODEL;
api.pullModel(theToolModel);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(theToolModel);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(theToolModel);

api.registerAnnotatedTools(new AnnotatedTool());

@ -703,7 +712,8 @@ class OllamaIntegrationTest {
void shouldChatWithStream() throws OllamaException {
api.deregisterTools();
api.pullModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.USER,

@ -729,7 +739,8 @@ class OllamaIntegrationTest {
@Order(15)
void shouldChatWithThinkingAndStream() throws OllamaException {
api.pullModel(THINKING_TOOL_MODEL_2);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(THINKING_TOOL_MODEL_2);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(THINKING_TOOL_MODEL_2);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.USER,

@ -747,6 +758,32 @@ class OllamaIntegrationTest {
assertNotNull(chatResult.getResponseModel().getMessage().getResponse());
}

/**
* Tests chat API with an image input from a URL.
*
* <p>Scenario: Sends a user message with an image URL and verifies the assistant's response.
* Usage: chat, vision model, image from URL, no tools, no thinking, no streaming.
*/
@Test
@Order(10)
void shouldChatWithImageFromURL() throws OllamaException, IOException, InterruptedException {
api.pullModel(VISION_MODEL);

OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(VISION_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.USER,
"What's in the picture?",
Collections.emptyList(),
"https://t3.ftcdn.net/jpg/02/96/63/80/360_F_296638053_0gUVA4WVBKceGsIr7LNqRWSnkusi07dq.jpg")
.build();
api.registerAnnotatedTools(new OllamaIntegrationTest());

OllamaChatResult chatResult = api.chat(requestModel, null);
assertNotNull(chatResult);
}

/**
* Tests chat API with an image input from a file and multi-turn history.
*

@ -758,7 +795,8 @@ class OllamaIntegrationTest {
@Order(10)
void shouldChatWithImageFromFileAndHistory() throws OllamaException {
api.pullModel(VISION_MODEL);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(VISION_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(VISION_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.USER,

@ -794,7 +832,7 @@ class OllamaIntegrationTest {
api.pullModel(VISION_MODEL);
try {
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(VISION_MODEL)
.withPrompt("What is in this image?")
.withRaw(false)

@ -827,7 +865,7 @@ class OllamaIntegrationTest {
void shouldGenerateWithImageFilesAndResponseStreamed() throws OllamaException, IOException {
api.pullModel(VISION_MODEL);
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(VISION_MODEL)
.withPrompt("What is in this image?")
.withRaw(false)

@ -862,7 +900,7 @@ class OllamaIntegrationTest {
boolean think = true;

OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(THINKING_TOOL_MODEL)
.withPrompt("Who are you?")
.withRaw(raw)

@ -891,7 +929,7 @@ class OllamaIntegrationTest {
api.pullModel(THINKING_TOOL_MODEL);
boolean raw = false;
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(THINKING_TOOL_MODEL)
.withPrompt("Who are you?")
.withRaw(raw)

@ -929,7 +967,7 @@ class OllamaIntegrationTest {
boolean raw = true;
boolean thinking = false;
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(GENERAL_PURPOSE_MODEL)
.withPrompt("What is 2+2?")
.withRaw(raw)

@ -957,7 +995,7 @@ class OllamaIntegrationTest {
api.pullModel(GENERAL_PURPOSE_MODEL);
boolean raw = true;
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(GENERAL_PURPOSE_MODEL)
.withPrompt("What is the largest planet in our solar system?")
.withRaw(raw)

@ -990,7 +1028,7 @@ class OllamaIntegrationTest {
// 'response' tokens
boolean raw = true;
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(THINKING_TOOL_MODEL)
.withPrompt(
"Count 1 to 5. Just give me the numbers and do not give any other"

@ -1055,7 +1093,7 @@ class OllamaIntegrationTest {
format.put("required", List.of("cities"));

OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(TOOLS_MODEL)
.withPrompt(prompt)
.withFormat(format)

@ -1081,7 +1119,8 @@ class OllamaIntegrationTest {
@Order(26)
void shouldChatWithThinkingNoStream() throws OllamaException {
api.pullModel(THINKING_TOOL_MODEL);
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(THINKING_TOOL_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(THINKING_TOOL_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.USER,

@ -1110,7 +1149,8 @@ class OllamaIntegrationTest {
void shouldChatWithCustomOptionsAndStreaming() throws OllamaException {
api.pullModel(GENERAL_PURPOSE_MODEL);

OllamaChatRequest builder = OllamaChatRequest.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.USER,

@ -1144,7 +1184,8 @@ class OllamaIntegrationTest {

api.registerTool(EmployeeFinderToolSpec.getSpecification());

OllamaChatRequest builder = OllamaChatRequest.builder().withModel(THINKING_TOOL_MODEL_2);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(THINKING_TOOL_MODEL_2);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.USER,

@ -1178,7 +1219,8 @@ class OllamaIntegrationTest {
File image1 = getImageFileFromClasspath("emoji-smile.jpeg");
File image2 = getImageFileFromClasspath("roses.jpg");

OllamaChatRequest builder = OllamaChatRequest.builder().withModel(VISION_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(VISION_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(
OllamaChatMessageRole.USER,

@ -1205,7 +1247,7 @@ class OllamaIntegrationTest {
void shouldHandleNonExistentModel() {
String nonExistentModel = "this-model-does-not-exist:latest";
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(nonExistentModel)
.withPrompt("Hello")
.withRaw(false)

@ -1232,7 +1274,8 @@ class OllamaIntegrationTest {
api.pullModel(GENERAL_PURPOSE_MODEL);

List<OllamaChatToolCalls> tools = Collections.emptyList();
OllamaChatRequest builder = OllamaChatRequest.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(OllamaChatMessageRole.USER, " ", tools) // whitespace only
.build();

@ -1255,7 +1298,7 @@ class OllamaIntegrationTest {
void shouldGenerateWithExtremeParameters() throws OllamaException {
api.pullModel(GENERAL_PURPOSE_MODEL);
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(GENERAL_PURPOSE_MODEL)
.withPrompt("Generate a random word")
.withRaw(false)

@ -1308,7 +1351,8 @@ class OllamaIntegrationTest {
void shouldChatWithKeepAlive() throws OllamaException {
api.pullModel(GENERAL_PURPOSE_MODEL);

OllamaChatRequest builder = OllamaChatRequest.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder().withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(OllamaChatMessageRole.USER, "Hello, how are you?")
.withKeepAlive("5m") // Keep model loaded for 5 minutes

@ -1332,7 +1376,7 @@ class OllamaIntegrationTest {
void shouldGenerateWithAdvancedOptions() throws OllamaException {
api.pullModel(GENERAL_PURPOSE_MODEL);
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(GENERAL_PURPOSE_MODEL)
.withPrompt("Write a detailed explanation of machine learning")
.withRaw(false)

@ -1377,8 +1421,8 @@ class OllamaIntegrationTest {
new Thread(
() -> {
try {
OllamaChatRequest builder =
OllamaChatRequest.builder()
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder()
.withModel(GENERAL_PURPOSE_MODEL);
OllamaChatRequest requestModel =
builder.withMessage(

@ -13,6 +13,7 @@ import static org.junit.jupiter.api.Assertions.*;
import io.github.ollama4j.Ollama;
import io.github.ollama4j.exceptions.OllamaException;
import io.github.ollama4j.models.generate.OllamaGenerateRequest;
import io.github.ollama4j.models.generate.OllamaGenerateRequestBuilder;
import io.github.ollama4j.models.generate.OllamaGenerateStreamObserver;
import io.github.ollama4j.models.response.OllamaResult;
import io.github.ollama4j.samples.AnnotatedTool;

@ -204,7 +205,7 @@ public class WithAuth {
format.put("required", List.of("isNoon"));

OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(model)
.withPrompt(prompt)
.withRaw(false)

@ -19,6 +19,7 @@ import io.github.ollama4j.models.chat.OllamaChatMessageRole;
import io.github.ollama4j.models.embed.OllamaEmbedRequest;
import io.github.ollama4j.models.embed.OllamaEmbedResult;
import io.github.ollama4j.models.generate.OllamaGenerateRequest;
import io.github.ollama4j.models.generate.OllamaGenerateRequestBuilder;
import io.github.ollama4j.models.generate.OllamaGenerateStreamObserver;
import io.github.ollama4j.models.request.CustomModelRequest;
import io.github.ollama4j.models.response.ModelDetail;

@ -157,7 +158,7 @@ class TestMockedAPIs {
OllamaGenerateStreamObserver observer = new OllamaGenerateStreamObserver(null, null);
try {
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(model)
.withPrompt(prompt)
.withRaw(false)

@ -179,7 +180,7 @@ class TestMockedAPIs {
String prompt = "some prompt text";
try {
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(model)
.withPrompt(prompt)
.withRaw(false)

@ -205,7 +206,7 @@ class TestMockedAPIs {
String prompt = "some prompt text";
try {
OllamaGenerateRequest request =
OllamaGenerateRequest.builder()
OllamaGenerateRequestBuilder.builder()
.withModel(model)
.withPrompt(prompt)
.withRaw(false)

@ -12,14 +12,15 @@ import static org.junit.jupiter.api.Assertions.*;

import io.github.ollama4j.models.chat.OllamaChatMessageRole;
import io.github.ollama4j.models.chat.OllamaChatRequest;
import io.github.ollama4j.models.chat.OllamaChatRequestBuilder;
import org.junit.jupiter.api.Test;

class TestOllamaChatRequestBuilder {

@Test
void testResetClearsMessagesButKeepsModelAndThink() {
OllamaChatRequest builder =
OllamaChatRequest.builder()
OllamaChatRequestBuilder builder =
OllamaChatRequestBuilder.builder()
.withModel("my-model")
.withThinking(true)
.withMessage(OllamaChatMessageRole.USER, "first");

@ -13,6 +13,7 @@ import static org.junit.jupiter.api.Assertions.assertThrowsExactly;

import io.github.ollama4j.models.chat.OllamaChatMessageRole;
import io.github.ollama4j.models.chat.OllamaChatRequest;
import io.github.ollama4j.models.chat.OllamaChatRequestBuilder;
import io.github.ollama4j.utils.OptionsBuilder;
import java.io.File;
import java.util.Collections;

@ -23,11 +24,11 @@ import org.junit.jupiter.api.Test;

public class TestChatRequestSerialization extends AbstractSerializationTest<OllamaChatRequest> {

private OllamaChatRequest builder;
private OllamaChatRequestBuilder builder;

@BeforeEach
public void init() {
builder = OllamaChatRequest.builder().withModel("DummyModel");
builder = OllamaChatRequestBuilder.builder().withModel("DummyModel");
}

@Test

@ -11,6 +11,7 @@ package io.github.ollama4j.unittests.jackson;
import static org.junit.jupiter.api.Assertions.assertEquals;

import io.github.ollama4j.models.generate.OllamaGenerateRequest;
import io.github.ollama4j.models.generate.OllamaGenerateRequestBuilder;
import io.github.ollama4j.utils.OptionsBuilder;
import org.json.JSONObject;
import org.junit.jupiter.api.BeforeEach;

@ -18,17 +19,16 @@ import org.junit.jupiter.api.Test;

class TestGenerateRequestSerialization extends AbstractSerializationTest<OllamaGenerateRequest> {

private OllamaGenerateRequest builder;
private OllamaGenerateRequestBuilder builder;

@BeforeEach
public void init() {
builder = OllamaGenerateRequest.builder().withModel("Dummy Model");
builder = OllamaGenerateRequestBuilder.builder().withModel("Dummy Model");
}

@Test
public void testRequestOnlyMandatoryFields() {
OllamaGenerateRequest req =
builder.withPrompt("Some prompt").withModel("Dummy Model").build();
OllamaGenerateRequest req = builder.withPrompt("Some prompt").build();

String jsonRequest = serialize(req);
assertEqualsAfterUnmarshalling(deserialize(jsonRequest, OllamaGenerateRequest.class), req);

@ -38,10 +38,7 @@ class TestGenerateRequestSerialization extends AbstractSerializationTest<OllamaG
public void testRequestWithOptions() {
OptionsBuilder b = new OptionsBuilder();
OllamaGenerateRequest req =
builder.withPrompt("Some prompt")
.withOptions(b.setMirostat(1).build())
.withModel("Dummy Model")
.build();
builder.withPrompt("Some prompt").withOptions(b.setMirostat(1).build()).build();

String jsonRequest = serialize(req);
OllamaGenerateRequest deserializeRequest =

@ -52,11 +49,7 @@ class TestGenerateRequestSerialization extends AbstractSerializationTest<OllamaG

@Test
public void testWithJsonFormat() {
OllamaGenerateRequest req =